Data drives decisions, and ensuring its quality is crucial for your success. When you work with clean and reliable data, you empower your organization to make informed choices and uncover valuable insights. In this blog post, we’ll explore best practices that can enhance your data quality and help you avoid costly mistakes and inefficiencies. By integrating these strategies into your workflow, you’ll not only improve your data’s reliability but also strengthen your overall operations. Let’s look at the essential practices for maintaining high data quality.

The Importance of Data Quality

For anyone who has ever sifted through piles of information, the value of data quality becomes immediately clear. In today’s digital age, you’re likely surrounded by a wealth of data from numerous sources, and the accuracy and reliability of that data can make all the difference. Imagine relying on flawed data to make critical business decisions, or worse, to guide your strategic vision. Poor data quality can lead to misguided actions and ineffective strategies, ultimately putting your organization’s goals at risk. Moreover, a lack of clean data can undermine your reputation with stakeholders, as they begin to question the validity of your findings and the decisions that stem from them.

For example, consider a retail company that bases its inventory decisions on inaccurate sales forecasts due to poor data quality. If the data fails to reflect actual customer preferences or purchasing behaviors, the company might overstock items that are seldom bought while neglecting popular products. This not only results in wasted resources and lost profits but also affects customer satisfaction. When customers can’t find the products they want, their loyalty may wane, pushing them towards competitors. Thus, the implications of poor data quality extend beyond immediate financial losses to long-term harm to your organization’s performance and market standing.

For businesses in highly regulated industries, the consequences of poor data quality can be even more severe. Compliance failures stemming from inaccurate data can lead not just to financial penalties but also to legal issues and reputational damage. Ensuring that your data is clean and trustworthy is not merely a technical issue; it is a strategic imperative. If you don’t prioritize data quality, you risk jeopardizing your entire operation and its future growth.

The Benefits of High-Quality Data

Data quality is the bedrock of effective decision-making and operational excellence. When you prioritize high-quality data, you enhance your ability to derive meaningful insights that inform strategic initiatives. High-quality data enables you to identify trends, predict customer behavior, and make informed business decisions that align with your organization’s goals. The clarity that comes with reliable data allows you to formulate strategies with confidence rather than relying on guesswork.

Data-driven organizations that foster a culture of data quality find themselves at a distinct advantage in the marketplace. Not only are they better equipped to understand their customers’ needs, but they also can adapt to changes in the market with agility. With accurate data as a foundation, your team can experiment with new ideas, develop innovative products, and refine marketing strategies that resonate with consumers. This leads to increased customer satisfaction and loyalty, which translates to better sales performance and higher revenue. Ultimately, high-quality data is a driver of competitive advantage, allowing you to stay ahead in a rapidly evolving business landscape.

Quality data isn’t just about accuracy; it’s also about timeliness and relevance. In order to fully harness the benefits of high-quality data, you should conduct regular data audits and maintenance, ensuring that the information you rely on is not only correct but also current. In a world that is constantly changing, having access to reliable and high-quality data empowers you to make smarter decisions and to seize opportunities as they arise, turning potential challenges into growth prospects.

Identifying Data Quality Issues

If you’ve ever encountered discrepancies in your data or had to spend hours rectifying errors, you understand how crucial it is to identify data quality issues early on. Ensuring the integrity of your data is not just an operational task; it’s fundamental to the successful decision-making processes that drive your organization. You might be wondering, what are the common problems that affect data quality? Understanding these issues is the first step towards establishing a robust data management strategy that can bolster your organization’s overall effectiveness.

Common Data Quality Problems

Quality data is contingent upon various factors, and several common problems can arise that ultimately compromise its reliability. One major issue is data duplication, where the same record exists multiple times within your system. This overlap can create confusion, leading to inconsistent reporting and misguided conclusions. Imagine making strategic business decisions based on duplicated data sets; the subsequent actions could be detrimental. Reviewing your data regularly for duplicates is crucial in maintaining a clean database.
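To make the duplicate review concrete, here is a minimal sketch in plain Python. The field names (`name`, `email`) and the sample records are hypothetical, and the matching rule (ignore case and surrounding whitespace) is an assumption; real systems often need fuzzier matching than this.

```python
from collections import defaultdict

def find_duplicates(records, key_fields):
    """Group record indexes that share the same normalized key."""
    groups = defaultdict(list)
    for index, record in enumerate(records):
        # Normalize case and surrounding whitespace so trivial variations still match.
        key = tuple(str(record.get(field, "")).strip().lower() for field in key_fields)
        groups[key].append(index)
    # Keep only the keys that occur more than once.
    return {key: indexes for key, indexes in groups.items() if len(indexes) > 1}

# Hypothetical sample data for illustration only.
customers = [
    {"name": "Ada Lovelace", "email": "ada@example.com"},
    {"name": "ada lovelace ", "email": "ADA@example.com"},
    {"name": "Alan Turing", "email": "alan@example.com"},
]
duplicates = find_duplicates(customers, ["name", "email"])
print(duplicates)
```

Here the first two records are reported as one duplicate group, while the third is left alone.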

Another prevalent issue is inaccurate or incomplete data. This can occur from human errors during data entry, system migrations, or even integration challenges between disparate systems. Inaccurate data can lead to misguided insights, potentially costing your organization time and resources. To combat this, it’s imperative to implement validation rules and automated checks to catch errors before they make their way into your systems.

Lastly, data inconsistency arises when the same data element is defined differently across systems or departments. For instance, if one department records customer satisfaction as a percentage while another uses a score on a fixed scale, combining the two without conversion will produce misleading results. Standardizing definitions and creating a data glossary can help eliminate such discrepancies, leading to a unified view across your organization.

Data Profiling and Analysis Techniques

Data profiling and analysis techniques are essential in the quest for high-quality data. By subjecting your data to rigorous profiling, you take the vital first step of identifying its current state and uncovering potential issues. Data profiling examines the structure, content, and relationships within your datasets, giving you insight into data accuracy, completeness, and consistency. Through this process, you can assess anomalies and discover patterns, which are crucial for making informed choices. Profiling your data helps you pinpoint areas that may require remedial action and creates a foundation for ongoing data management efforts.
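As a rough illustration of what a basic profile can look like, the sketch below counts missing values, distinct values, and observed types per field. The `orders` records and field names are made up for the example, and treating `None` and empty strings as missing is an assumption you may need to adjust.

```python
def profile(records):
    """Return per-field counts of missing values, distinct values, and observed types."""
    fields = sorted({field for record in records for field in record})
    report = {}
    for field in fields:
        values = [record.get(field) for record in records]
        # Assumption: None and empty strings both count as missing.
        present = [v for v in values if v not in (None, "")]
        report[field] = {
            "missing": len(values) - len(present),
            "distinct": len(set(present)),
            "types": sorted({type(v).__name__ for v in present}),
        }
    return report

# Hypothetical sample data for illustration only.
orders = [
    {"order_id": 1, "amount": 19.99, "country": "US"},
    {"order_id": 2, "amount": None, "country": "us"},
    {"order_id": 3, "amount": "24.50", "country": ""},
]
report = profile(orders)
for field, stats in report.items():
    print(field, stats)
```

Even this small profile surfaces three typical findings: a missing amount, an amount stored as text rather than a number, and a country code written in two different cases.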

Quality data profiling not only clarifies the abnormalities within your databases, but it also lays the groundwork for implementing effective data governance policies. By engaging in a continuous cycle of profiling and analysis, you’re ensuring that any new data being integrated into your systems adheres to the standards you’ve established. The insights gained from these analyses can inform strategies that better align your data management processes with your organizational goals, ultimately leading to improved decision-making.

When you take the time to engage in data profiling and analysis techniques, you empower yourself to proactively address data quality issues before they snowball into larger problems. Quality insights can turn into a strategic asset that drives your organization forward, making it imperative for you to prioritize these practices as part of your data quality management efforts.

Data Validation and Verification

Keep in mind that ensuring the quality of your data is a continuous process, and incorporating reliable validation methods is an essential part of that journey. One of the most efficient ways to maintain clean and accurate data is to use automated data validation methods. These methods evaluate data entries against established rules and criteria, filtering out errors before they can affect your analysis or decision-making. By integrating automated validation into your data workflow, you can significantly reduce the manual effort required and limit the room for human error, allowing you to concentrate on deriving valuable insights from your data rather than simply correcting it.

Any organization looking to implement these methods should start by identifying key data quality rules tailored to their specific needs. Common validation techniques include checking for consistency, accuracy, completeness, and uniqueness. For example, automated systems can be programmed to flag any duplicate entries, identify records that do not conform to formatting guidelines, or highlight discrepancies in numerical data that exceed defined thresholds. By employing these smart systems, you not only enhance data integrity but also free up valuable resources within your team, paving the way for a more efficient data management process.
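The three checks mentioned above (duplicates, formatting, and numeric thresholds) can be sketched as a small rule-based validator. Everything specific here is an assumption for illustration: the field names, the deliberately loose email pattern, and the `MAX_AMOUNT` threshold.

```python
import re

# Assumption: a loose format check, not a full email validator.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")
MAX_AMOUNT = 10_000  # hypothetical upper threshold for a single amount

def validate(records):
    """Return a list of (row_index, problem) tuples for every rule violation."""
    problems = []
    seen_ids = set()
    for index, record in enumerate(records):
        record_id = record.get("id")
        if record_id in seen_ids:
            problems.append((index, "duplicate id"))
        seen_ids.add(record_id)
        if not EMAIL_PATTERN.match(record.get("email") or ""):
            problems.append((index, "bad email format"))
        amount = record.get("amount")
        if amount is None:
            problems.append((index, "missing amount"))
        elif not 0 <= amount <= MAX_AMOUNT:
            problems.append((index, "amount out of range"))
    return problems

# Hypothetical sample data for illustration only.
rows = [
    {"id": 1, "email": "ada@example.com", "amount": 120.0},
    {"id": 1, "email": "not-an-email", "amount": 50.0},
    {"id": 2, "email": "alan@example.com", "amount": 25_000.0},
    {"id": 3, "email": "grace@example.com", "amount": None},
]
issues = validate(rows)
for row_index, problem in issues:
    print(row_index, problem)
```

Returning a list of problems, rather than stopping at the first failure, lets you report everything that is wrong with a batch in one pass.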

In addition to these fundamental techniques, you might consider utilizing machine learning algorithms to develop more sophisticated validation processes. These algorithms are capable of learning patterns in your data and can adapt over time, improving the accuracy of your validation steps as they encounter new information. The key is to pair these advanced tools with ongoing collaboration across departments, fostering a culture of data quality awareness throughout your organization. Ultimately, automated data validation methods can serve as a powerful ally in your quest for reliable and trustworthy data.

Manual Data Verification Techniques

Verification is another crucial step in ensuring data integrity, and while automation offers many advantages, the human touch remains invaluable in certain contexts. With manual data verification techniques, you engage your team in the process of meticulously reviewing data entries and confirming their accuracy. This approach often complements automated methods, where complex or context-dependent validations may benefit from human judgment. Whether it’s cross-referencing records, validating data against external sources, or conducting routine audits, these techniques can help identify anomalies and ensure the reliability of your data collection efforts.

Verification methods can include hands-on techniques like sample checks, where team members take a subset of data and analyze it manually for discrepancies. Additionally, it’s vital to establish clear guidelines for these checks, ensuring that each team member understands the expected standards of data quality and the importance of their role in the verification process. Collaboration is key here; working together allows your team to identify common issues and share insights gleaned from their individual review experiences. By maintaining regular communication and sharing best practices, you cultivate a culture of data diligence that benefits your entire organization.
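A sample check usually starts with drawing the subset to review. The sketch below is one simple way to do that; the function name, the sample size, and the fixed seed are illustrative choices, not a prescribed procedure.

```python
import random

def draw_review_sample(records, sample_size, seed=0):
    """Pick a reproducible random subset of records for manual review."""
    # A fixed seed lets a second reviewer re-draw exactly the same sample.
    rng = random.Random(seed)
    size = min(sample_size, len(records))
    return rng.sample(records, size)

# Hypothetical dataset of 100 records identified by id.
records = [{"id": i} for i in range(1, 101)]
sample = draw_review_sample(records, 10)
print([record["id"] for record in sample])
```

Sampling at random, rather than reviewing the first few rows, avoids checking only the oldest or most convenient records.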

With manual data verification techniques, you have the opportunity to develop a deeper understanding of your data. Engaging with your data rather than simply relying on automated processes lets you pick up on issues that technology might overlook. It’s this combination of automated methods for scalability and the nuanced understanding from manual verification that truly enhances data quality. Remember, it’s not enough to simply collect data; you need to nurture it. By investing time and effort into both validation and verification, you set the foundation for a robust data management strategy that will pay dividends in the long run.

Data Normalization and Standardization

The Importance of Data Consistency

Many organizations today are flooded with data from various sources, including customer interactions, social media, and internal operations. This data, however, can often be inconsistent, leading to confusion and inaccuracies. When you think about data integrity, remember that consistency is the cornerstone of reliability. If your records contain differing formats, varied units of measurement, or conflicting terminology, not only does this hinder your ability to derive meaningful insights, but it also compromises the decisions you make based on that data. By ensuring consistency in your data, you create a unified framework that supports clarity and enhances the decision-making process.

To achieve this state of uniformity, organizations must invest in data normalization and standardization practices. These processes allow you to align data across different systems and sources, making it easier to analyze and utilize. The goal here is to eliminate discrepancies and ensure that all datasets adhere to a defined format. When data is consistent, you can confidently trust the insights derived, whether you’re working on customer segmentation, product development, or market analysis. Ultimately, the importance of data consistency cannot be overstated—it empowers you to operate from a place of certainty rather than guesswork.

Moreover, the impact of inconsistency extends beyond internal processes; it can affect customer relationships and overall business reputation as well. Imagine sending out marketing materials based on faulty data, resulting in miscommunication with your customers. The fallout could be significant and can lead to a loss of trust. By prioritizing consistency, you foster a coherent narrative and ensure that every interaction points back to accurate, reliable data. In this ever-evolving digital landscape, where rapid decision-making is crucial, the ability to rely on consistent data will allow you to stay agile and responsive to your customers’ needs.

Techniques for Normalizing and Standardizing Data

One effective approach to achieving data normalization and standardization is to implement a systematic framework. This can involve a number of techniques such as data profiling, where you assess the quality of your existing data and identify inconsistencies. In addition, performing data deduplication helps you eliminate duplicate records that often cloud analysis and can lead to erroneous conclusions. Furthermore, employing regular expressions can be a powerful tool to automate format conversions, ensuring that addresses, dates, and other fields adhere to the same standards throughout your dataset.
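To show how regular expressions can automate a format conversion, here is a small sketch that rewrites dates into ISO 8601 (`YYYY-MM-DD`). The two accepted input formats are assumptions chosen for the example; note that the patterns check only the shape of the text, not whether the month and day are valid.

```python
import re

# Assumed input formats: DD/MM/YYYY, plus YYYY.MM.DD or YYYY-MM-DD.
DAY_FIRST = re.compile(r"^(\d{1,2})/(\d{1,2})/(\d{4})$")
YEAR_FIRST = re.compile(r"^(\d{4})[.\-](\d{1,2})[.\-](\d{1,2})$")

def normalize_date(text):
    """Return the date as YYYY-MM-DD, or None if the format is not recognized."""
    text = text.strip()
    match = DAY_FIRST.match(text)
    if match:
        day, month, year = match.groups()
        return f"{year}-{int(month):02d}-{int(day):02d}"
    match = YEAR_FIRST.match(text)
    if match:
        year, month, day = match.groups()
        return f"{year}-{int(month):02d}-{int(day):02d}"
    return None  # unrecognized format: leave it for manual review

for raw in ["3/7/2024", "2024.7.3", " 2024-07-03 ", "July 3"]:
    print(repr(raw), "->", normalize_date(raw))
```

Returning `None` for unrecognized input, instead of guessing, keeps ambiguous values visible so they can be reviewed by a person.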

Another technique involves the establishment of specific data entry protocols, where user guidelines dictate how information should be recorded. By conditionally formatting fields in your databases and implementing dropdown lists for selections, you reduce the likelihood of human error. Standardizing naming conventions also creates a seamless experience when data is shared across different departments or systems. Ultimately, by combining these various techniques, you create a robust environment where your data thrives and supports your organization’s objectives.

Normalizing your data might also incorporate the utilization of tools and platforms designed specifically for data management. These tools often come equipped with features such as automated cleaning processes and standardization templates, streamlining your efforts and ensuring consistency across the board. By embracing technological solutions, you not only save time but also enhance the reliability of your data, making it a powerful asset in your decision-making arsenal.

Handling Missing or Incomplete Data

Failing to address missing or incomplete data can jeopardize the reliability of your analyses and the decisions you base on them. As you delve into data management, it’s crucial to accept that data gaps will often occur, whether because of collection errors, system malfunctions, or human oversight. What matters is how you choose to handle these gaps; acknowledging them openly leads to more informed, reliable insights. The strategies you adopt will directly influence the quality of your datasets and, with it, the integrity of your findings and decisions.

Strategies for Dealing with Missing Data

With a diverse array of strategies to address missing data, you can choose the approach that best aligns with your data’s context and your analysis goals. One of the most straightforward tactics is deletion, which removes data entries that contain missing values. While this method is simple, it shrinks the effective sample size, and it can bias your results if the values are not missing completely at random. Therefore, you should apply this strategy cautiously and only when the amount of missing data is small relative to your entire dataset.
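One way to keep deletion cautious is to refuse to drop rows when too many would be lost. The sketch below does that; the 5% limit is an arbitrary assumption for illustration, not a recommended standard.

```python
def drop_incomplete(records, required_fields, max_drop_fraction=0.05):
    """Remove records with missing required fields, unless that would drop too many."""
    complete = [
        record for record in records
        if all(record.get(field) is not None for field in required_fields)
    ]
    dropped = len(records) - len(complete)
    # Assumption: losing more than max_drop_fraction of rows is too risky to do silently.
    if records and dropped / len(records) > max_drop_fraction:
        raise ValueError(
            f"would drop {dropped} of {len(records)} records; consider another strategy"
        )
    return complete

# Hypothetical data: 19 complete readings and 1 missing reading.
readings = [{"value": i} for i in range(19)] + [{"value": None}]
cleaned = drop_incomplete(readings, ["value"])
print(len(readings), "->", len(cleaned))
```

Raising an error when the limit is exceeded forces a deliberate decision instead of quietly shrinking the dataset.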

Another effective method is to categorize missing data as a separate group that you can analyze independently. This allows you to retain all available records while still gaining insights into the nature of the missingness itself. This approach can provide valuable information about why certain data points are absent, which could indicate deeper issues with the data collection process or the demographic segments involved. By treating missing data as a legitimate category, you may be able to generate new hypotheses or refine your analysis.
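For a categorical field, treating missingness as its own group can be as simple as assigning an explicit label. In this sketch the `income_band` field, the survey records, and the `"Unknown"` label are all hypothetical.

```python
from collections import Counter

MISSING_LABEL = "Unknown"  # hypothetical label for the missing-value category

def label_missing(records, field):
    """Return copies of the records with missing values replaced by an explicit label."""
    labeled = []
    for record in records:
        value = record.get(field)
        # Assumption: None and empty strings both count as missing.
        if value in (None, ""):
            value = MISSING_LABEL
        labeled.append({**record, field: value})
    return labeled

# Hypothetical sample data for illustration only.
survey = [
    {"respondent": 1, "income_band": "low"},
    {"respondent": 2, "income_band": None},
    {"respondent": 3, "income_band": "high"},
    {"respondent": 4, "income_band": ""},
]
labeled = label_missing(survey, "income_band")
counts = Counter(record["income_band"] for record in labeled)
print(counts)
```

All four respondents stay in the dataset, and the size of the `Unknown` group becomes something you can analyze in its own right.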

Lastly, consider drawing on external data sources to fill your gaps. You may find that relevant datasets exist elsewhere that can provide insights into your missing values. This not only enriches your data but also improves your understanding of the context surrounding the missing entries. Collaborating with colleagues, tapping into data libraries, or using online resources can reveal supplementary information that makes your dataset more comprehensive.

Imputation and Interpolation Techniques

Techniques for dealing with missing data often focus on the imputation and interpolation methods which allow you to estimate and fill in the missing entries. Imputation refers to the statistical process of replacing missing values with substituted ones based on the observed characteristics of the data. This could be done using mean, median, or mode calculations, or more sophisticated methods, such as regression techniques or machine learning algorithms. The choice of imputation method can profoundly affect the accuracy of your analysis, so it’s crucial to select an approach that is not only mathematically sound but also appropriate for your dataset and analytical goals.
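As a minimal example of the simplest kind of imputation, the sketch below fills missing numeric values with the median of the observed ones. The `ages` list is made up, and the median is only one of the options named above; it is used here because it is less sensitive to outliers than the mean.

```python
from statistics import median

def impute_median(values):
    """Replace None entries with the median of the observed values."""
    observed = [v for v in values if v is not None]
    if not observed:
        raise ValueError("cannot impute: no observed values")
    fill = median(observed)
    return [fill if v is None else v for v in values]

# Hypothetical sample data for illustration only.
ages = [34, None, 29, 41, None, 38]
imputed = impute_median(ages)
print(imputed)
```

Keep in mind that filling every gap with the same value reduces the variability of the field, which is one reason more sophisticated methods exist.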

Techniques such as linear interpolation, where missing values are estimated based on known data points that precede and follow them, can provide a straightforward solution for time-series data. For more complex patterns or datasets that do not lend themselves to linear trends, you may want to explore nonlinear interpolation methods, enabling a more nuanced understanding of relationships within your data. Importantly, these methodologies not only help clean your dataset but also maintain a sense of continuity and fidelity throughout the analysis.
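Linear interpolation for an evenly spaced series can be sketched in a few lines. This version assumes equal spacing between observations and fills only gaps that have a known value on both sides; leading and trailing gaps are left as `None` rather than extrapolated.

```python
def interpolate_linear(series):
    """Fill None gaps between known points; leading and trailing gaps stay None."""
    filled = list(series)
    known = [i for i, value in enumerate(series) if value is not None]
    for left, right in zip(known, known[1:]):
        span = right - left
        for i in range(left + 1, right):
            # Weight grows from 0 at the left neighbour to 1 at the right neighbour.
            weight = (i - left) / span
            filled[i] = series[left] + weight * (series[right] - series[left])
    return filled

# Hypothetical evenly spaced readings with gaps.
readings = [None, 10.0, None, 14.0, None, None, None, 22.0, None]
filled = interpolate_linear(readings)
print(filled)
```

In this example the gap between 10.0 and 14.0 becomes 12.0, and the three gaps between 14.0 and 22.0 become 16.0, 18.0, and 20.0.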

It’s crucial to remember that while imputation and interpolation techniques can be powerful allies in managing missing data, they are not without risks. Each method introduces some level of uncertainty, and poor imputation choices can lead to misleading analyses. Thus, understanding the underlying reasons for your data gaps, the characteristics of your dataset, and the limitations of your chosen method is critical. Ultimately, striking the right balance between maintaining data quality and deploying effective strategies ensures that your analyses remain robust and trustworthy.

Data Cleansing and Enrichment

All organizations today rely on data to drive decisions, streamline operations, and foster relationships with customers. However, raw data is often filled with inaccuracies, duplicates, and inconsistencies – a situation that can lead to poor insights and misguided strategies. This is where data cleansing comes into play. An effective data cleansing strategy involves various methods and tools that help identify and rectify these issues, ensuring your data is as clean and reliable as possible. Your first step is to understand the common data cleansing methods: standardization, deduplication, error correction, and normalization. Standardization focuses on ensuring that data is formatted consistently across the board, making it easier to analyze. Deduplication removes duplicate records, which can inflate your datasets and lead to skewed results, while error correction focuses on identifying and fixing inaccuracies such as misspellings or incorrect entries. Normalization ensures that datasets follow a uniform structure, which facilitates seamless merging and comparison of data from different sources.

The tools you can employ for data cleansing are numerous and cater to a variety of needs. Consider leveraging software solutions such as OpenRefine or Talend, which offer robust features for identifying and cleaning inconsistencies. Alternatively, many organizations opt for programming languages like Python or R, which provide powerful libraries that can automate data cleansing tasks through scripts and algorithms. These tools make it easier to manage large volumes of data, eliminating manual errors and saving you time. It is crucial to continuously monitor your datasets for quality; this can be accomplished by establishing a routine data review process that detects anomalies and sets standards for input going forward. Ultimately, investing in both the right tools and processes will transform raw data into a valuable asset for your organization.

Additionally, you should always keep in mind that data cleansing is not a one-time activity but rather an ongoing process. Regularly revisiting your datasets and employing a systematic approach will help maintain their quality over time. As new data is generated and existing data evolves, your cleansing protocols must adapt to ensure the utmost reliability. You can think of this practice as housekeeping for your data; just as you wouldn’t let clutter accumulate in your home, you shouldn’t allow flawed data to pile up in your databases.

Data Enrichment Techniques and Best Practices

Cleansing your data is just one part of the broader journey toward achieving high-quality data; data enrichment plays a critical role as well. Data enrichment involves augmenting your existing datasets with additional information, converting your basic data into a goldmine of insights. This could involve integrating third-party data sources, linking datasets across different platforms, or adding contextual attributes that give depth to your existing information. For instance, you might enhance customer records with demographic data, social media profiles, or purchase history, providing you with a fuller picture of your audience. By employing data enrichment techniques, you enable more accurate segmentation, targeted marketing campaigns, and improved decision-making based on comprehensive insights.

Your approach to data enrichment should involve a thoughtful strategy. Start by identifying key data gaps in your current datasets, determining what additional information could provide more value. Next, seek reputable data providers and aim for integration that protects user privacy and complies with regulations. Best practices include verifying the quality of external data sources before augmentation and ensuring consistency across enriched datasets. You should also regularly review the effectiveness of your data enrichment process; this entails monitoring the impact of the newly integrated data on your outcomes and making necessary adjustments as your business context changes. Remember, the objective is not simply to add as much data as possible but to ensure that the enrichment adds genuine value to your organization’s objectives.

Another vital consideration is the synergy between cleansing and enrichment efforts. While cleansing addresses the accuracy of the data you possess, enrichment enhances it, providing context and layers that lead to more informed decisions. By combining these two practices, you can create a robust data strategy that transforms your data from a mere collection of facts into powerful insights that drive your business forward. Never underestimate the potential your data carries when it’s both clean and enriched; the quality of your decisions depends heavily on the quality of your data.

Data Governance and Stewardship

Let’s now turn to another critical aspect of maintaining high data quality: data governance and stewardship. At the heart of this practice lies establishing robust data governance policies that serve as the backbone of your organization’s data management strategy. These policies are not mere guidelines; they define how data is collected, stored, processed, and shared within your organization. By crafting comprehensive data governance policies, you create a structured framework that not only promotes transparency but also fosters accountability. This framework ensures that everyone in your organization understands the significance of data quality and the role they play in maintaining it.

Establishing Data Governance Policies

Policies around data governance should cover a range of critical areas, including data ownership, data usage, and compliance with legal and regulatory requirements. When you develop these policies, you’re not only protecting your organization against potential data breaches and compliance risks but also enhancing the reliability of the data you utilize. You see, when data is well-governed, it becomes a valuable asset that can drive insights, support decision-making, and foster innovation. The establishment of these policies should involve input from various stakeholders, ensuring that the framework you create is both applicable and effective across different departments within your organization.

Moreover, the process of developing data governance policies should not be static; it requires regular review and updates to remain relevant in today’s fast-paced data landscape. As your organization evolves, so too will your data governance needs. Whether it’s integrating new data sources, adapting to changes in regulations, or responding to shifts in business strategy, your policies should flex to accommodate new realities. Frequent communication about these changes and providing ongoing training will ensure that your team remains informed and engaged with the governance framework.

Finally, it’s necessary to implement mechanisms for monitoring compliance with these policies. Key performance indicators (KPIs) can help track adherence and assess the overall effectiveness of your data governance efforts. By holding your team accountable, you further cement the importance of clean and reliable data as a fundamental part of your organization’s culture.

Roles and Responsibilities in Data Governance

Policies governing data management also delineate roles and responsibilities across your organization. Effective data governance requires a clear understanding of who is responsible for what. You need to assign specific data stewards—individuals tasked with overseeing data quality within their domains. These stewards act as the go-to contacts for any data-related issues and play a pivotal role in ensuring that data is accurately defined, consistent, and securely maintained. By having clearly defined roles within your governance structure, you create a collaborative environment where responsibilities are understood, and accountability is shared.

Roles within this framework can include executive sponsors, data owners, data custodians, and analytics teams, among others. Each of these roles has a unique contribution to the data governance initiative, which is crucial for success. For instance, executive sponsors are typically responsible for championing governance policies at a strategic level, ensuring that the principles of data quality have buy-in from the highest levels of your organization. Data owners, on the other hand, oversee specific datasets and are charged with enforcing policies related to data accuracy, access, and usage. Custodians manage the technical aspects of your data infrastructure, while analytics teams leverage data governance processes to derive insights that inform decision-making.

Roles in data governance should adapt as your organization grows and technology evolves. Regular assessments and role reviews ensure that everyone remains empowered and equipped to meet the data quality goals you’ve set. By reinforcing these connections and responsibilities, you cultivate a culture of data stewardship that values collaboration and shared goals, ultimately leading to cleaner, more reliable data.

Data Quality Metrics and KPIs

Defining and Tracking Data Quality Metrics

To ensure that your data remains reliable and clean, it’s crucial to define and track appropriate data quality metrics. This begins with understanding the dimensions of data quality — accuracy, completeness, consistency, timeliness, and uniqueness. Each of these dimensions plays a vital role in how you assess the overall quality of your data. As you begin to define metrics for each dimension, you should consider what specific indicators reflect your goals and business needs. For example, establishing a target threshold for accuracy — say, 95% — allows you to measure performance quantitatively and enables you to take actionable steps if you’re lagging behind.
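Two of these dimensions, completeness and uniqueness, are easy to express as ratios and compare against a target. In the sketch below, the `email` field and sample records are hypothetical, and the 95% target simply reuses the example figure from the paragraph above.

```python
TARGET = 0.95  # example threshold taken from the text; adjust to your own goals

def completeness(records, field):
    """Share of records where the field is populated."""
    if not records:
        return 0.0
    filled = sum(1 for record in records if record.get(field) not in (None, ""))
    return filled / len(records)

def uniqueness(records, field):
    """Share of populated values that are distinct."""
    values = [record[field] for record in records if record.get(field) not in (None, "")]
    if not values:
        return 0.0
    return len(set(values)) / len(values)

# Hypothetical sample data for illustration only.
contacts = [
    {"email": "ada@example.com"},
    {"email": "alan@example.com"},
    {"email": "ada@example.com"},
    {"email": "grace@example.com"},
    {"email": None},
]
metrics = {
    "completeness": completeness(contacts, "email"),
    "uniqueness": uniqueness(contacts, "email"),
}
for name, value in metrics.items():
    status = "ok" if value >= TARGET else "below target"
    print(f"{name}: {value:.2f} ({status})")
```

Both metrics fall below the target here (0.80 and 0.75), which is the kind of signal that should trigger a closer look at how the data is being captured.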

Once your metrics are in place, tracking them becomes an ongoing process that helps you stay on top of any data quality issues that may arise. This requires implementing a robust monitoring system that allows for the regular assessment of your data against the defined metrics. You may use specialized tools or dashboards to visualize your data quality and track performance over time. As you closely monitor these metrics, you’ll gain insights into patterns or recurring issues, allowing you to respond swiftly and strategically to prevent data decay. Remember, effective tracking not only highlights problems but also showcases improvements when adjustments are made.

Lastly, don’t forget the importance of cross-department collaboration when defining and tracking these metrics. Engage various departments to gather insights about what data is most critical to their operations. This will help ensure that the metrics you define are relevant to the whole organization, rather than being siloed within a single perspective. You want to create a culture of accountability around data quality, where everyone in your organization understands its significance and how it affects your goals. By fostering this collaborative environment, you’ll be in a stronger position to maintain the integrity of your data over time.

Using Data Quality KPIs to Drive Improvement

KPIs, or Key Performance Indicators, are crucial tools that allow you to assess the effectiveness of your data quality initiatives and drive continuous improvement. By setting specific, measurable, and realistic KPIs, you lay the groundwork for a data quality program that doesn’t just react to problems but proactively seeks to enhance the health of your data. For example, you might set a KPI that aims for a reduction in duplicate records by 25% over a quarter. Such clear targets provide a focus for your efforts and help your team understand exactly what needs to be accomplished.
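The duplicate-reduction KPI from the example above comes down to simple arithmetic. The sketch below computes it; the record counts are invented for illustration, and the 25% target is the one used in the text.

```python
def duplicate_reduction(start_count, end_count):
    """Fractional reduction in duplicate records over the period."""
    if start_count == 0:
        return 0.0  # nothing to reduce
    return (start_count - end_count) / start_count

def kpi_met(start_count, end_count, target=0.25):
    """True if duplicates fell by at least the target fraction."""
    return duplicate_reduction(start_count, end_count) >= target

# Hypothetical counts: 1,200 duplicates at the start of the quarter, 870 at the end.
reduction = duplicate_reduction(1200, 870)
print(f"reduction: {reduction:.1%}, target met: {kpi_met(1200, 870)}")
```

With these numbers, 330 of 1,200 duplicates were removed, a reduction of 27.5%, so the quarterly target is met.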

Utilizing data quality KPIs helps determine the success of your data management strategies by allowing you to evaluate both the current state of your data and the impact of any changes you implement. This means that rather than merely tracking whether your systems are functioning correctly, you can pinpoint how improvements translate into actual business benefits — such as enhanced decision-making capabilities or reduced operational costs. Establishing a feedback loop, where you regularly review these KPIs, allows you to respond to your findings, whether that involves scaling successful practices or addressing areas still requiring attention. This iterative process is at the heart of fostering a culture that prioritizes clean and reliable data.

For instance, if you notice that one specific data entry source consistently leads to higher error rates than others, it may prompt you to investigate the underlying causes. Perhaps this source lacks sufficient validation mechanisms or is used by individuals unfamiliar with data entry protocols. Armed with this insight, you can implement targeted training for users or adjust the entry forms to include checks that mitigate errors. In this way, using KPIs not only informs you of where you stand but also provides a pathway for systematic improvement, ensuring your organization continuously raises the bar on the quality of its data assets.
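One way to spot such a source is to compute an error rate per entry channel and flag any that exceed a tolerance you choose. This is a minimal sketch: the `source` and `has_error` fields, the channel names, and the 20% threshold are assumptions made for the example.

```python
from collections import defaultdict


def error_rate_by_source(entries):
    """Return {source: fraction of that source's entries marked as errors}."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for entry in entries:
        totals[entry["source"]] += 1
        if entry["has_error"]:
            errors[entry["source"]] += 1
    return {source: errors[source] / totals[source] for source in totals}


def sources_to_investigate(rates, threshold=0.20):
    """Sources whose error rate is above the (hypothetical) threshold."""
    return sorted(source for source, rate in rates.items() if rate > threshold)


# Hypothetical audit sample: 10 entries from each of two channels.
entries = (
    [{"source": "web_form", "has_error": False}] * 9
    + [{"source": "web_form", "has_error": True}] * 1
    + [{"source": "call_center", "has_error": False}] * 6
    + [{"source": "call_center", "has_error": True}] * 4
)

rates = error_rate_by_source(entries)
print(rates)
print(sources_to_investigate(rates))
```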

Implementing Data Quality Controls

Unlike a casual stroll through a park, ensuring data quality requires a more deliberate approach, particularly when it comes to creating systems and processes that support clean and reliable data. One crucial tactic for effective data management is the integration of structured checklists and routine audits into your data quality controls. By developing a comprehensive data quality checklist, you can establish a clear framework of standards and criteria that your data must meet before it is deemed acceptable. This checklist should cover various aspects, including accuracy, completeness, consistency, and timeliness. By having these criteria in place, you can proactively identify and rectify data quality issues before they escalate and impact your decision-making processes.

Data Quality Checklists and Audits

Checklists are powerful tools that can streamline your data quality efforts. Imagine walking into an inspection room equipped with a checklist that reviews every critical component of your data. You can methodically assess your datasets against predetermined thresholds, providing a systematic way to gauge the health of your data. Moreover, engaging in regular audits not only helps in identifying discrepancies but also fosters a culture of excellence within your organization. Everyone involved in data entry or management becomes aware of the standards and is more likely to take their roles seriously, contributing to a higher overall data quality.
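A checklist of this kind can be expressed as a list of named checks, each with a threshold the dataset must meet. The sketch below covers only completeness and timeliness; the field names, thresholds, and sample rows are assumptions for illustration, and accuracy or consistency checks would be added in the same shape.

```python
from datetime import date


def completeness(rows, field):
    """Fraction of rows with a non-empty value for `field`."""
    return sum(1 for r in rows if r.get(field) not in (None, "")) / len(rows)


def timeliness(rows, field, today, max_age_days):
    """Fraction of rows whose `field` date is within max_age_days of today."""
    fresh = sum(1 for r in rows if (today - r[field]).days <= max_age_days)
    return fresh / len(rows)


def run_checklist(rows, checklist):
    """Return {check name: (score, passed)} for each checklist item."""
    if not rows:
        raise ValueError("cannot audit an empty dataset")
    results = {}
    for name, score_fn, threshold in checklist:
        score = score_fn(rows)
        results[name] = (score, score >= threshold)
    return results


# Hypothetical customer rows and audit date.
rows = [
    {"email": "ana@example.com", "updated": date(2024, 5, 1)},
    {"email": "", "updated": date(2024, 4, 20)},
    {"email": "cy@example.com", "updated": date(2023, 1, 15)},
    {"email": "di@example.com", "updated": date(2024, 5, 10)},
]
today = date(2024, 5, 15)

# Each item: (name, scoring function, minimum acceptable score).
checklist = [
    ("email completeness", lambda rs: completeness(rs, "email"), 0.95),
    ("updated within 90 days", lambda rs: timeliness(rs, "updated", today, 90), 0.70),
]

report = run_checklist(rows, checklist)
for name, (score, passed) in report.items():
    print(f"{name}: {score:.2f} {'PASS' if passed else 'FAIL'}")
```

Keeping the checklist as data rather than hard-coded logic makes it easy to review the thresholds with the teams who own each dataset.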

By conducting periodic audits, you can uncover hidden issues that might not be visible on a day-to-day basis. These audits should not be one-off events but rather part of an ongoing strategy to ensure your data is consistently monitored and improved. You can employ both internal and external resources to conduct these assessments, allowing for an unbiased evaluation of data quality. By integrating audit findings back into your processes, you create a feedback loop that not only rectifies past mistakes but also informs future practices, ensuring continuous improvement.

As you implement effective data quality checklists and audits, remember to engage your team and foster dialogues around data integrity. Encourage cross-functional collaboration where teams can voice concerns, share insights, and collaborate on solutions. This inclusive approach amplifies accountability and stewardship over data, ultimately leading to a more robust understanding and enhancement of data quality across your organization’s ecosystem.

Continuous Monitoring and Improvement

Monitoring is the backbone of data quality management as it allows you to maintain a vigilant eye over your datasets. Continuous monitoring involves establishing automated systems that periodically evaluate your data for issues such as duplication, inaccuracies, or inconsistencies. Utilizing data quality tools can facilitate this process, enabling you to address problems in real time rather than waiting for a scheduled audit. When you actively monitor your data, you move from a reactive stance to a proactive one, catching errors as they occur and thereby increasing trust in the information used for key decisions.

Beyond just identifying issues, continuous monitoring provides insightful analytics that can guide your improvement strategies. By analyzing trends over time, you can detect systemic problems that may require more comprehensive solutions, such as training your staff on data input methods or revising data collection procedures. Moreover, it allows you to measure the effectiveness of any changes you’ve made, ensuring that your iterations are leading to tangible improvements in data quality. Effective feedback loops from continuous monitoring can help refine processes and establish best practices that can be replicated across departments, driving a holistic improvement in data governance.
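Trend analysis can start very simply. The sketch below compares the average error rate of the most recent window with the window before it and raises a flag when the increase exceeds a tolerance. The `trend_alert` name, the seven-day window, and the 10% tolerance are assumptions for the example, not recommended settings.

```python
from statistics import mean


def trend_alert(daily_error_rates, window=7, tolerance=0.10):
    """True if the latest window's mean error rate rose by more than
    `tolerance` (relative) compared with the window before it."""
    if len(daily_error_rates) < 2 * window:
        return False  # not enough history to compare two windows
    recent = mean(daily_error_rates[-window:])
    previous = mean(daily_error_rates[-2 * window:-window])
    if previous == 0:
        return recent > 0
    return (recent - previous) / previous > tolerance


# Hypothetical daily error rates from an automated monitor.
stable = [0.02] * 14
rising = [0.02] * 7 + [0.03] * 7

print(trend_alert(stable))
print(trend_alert(rising))
```

The same comparison, run after a process change, also gives a first indication of whether the change actually reduced errors.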

Implementing continuous monitoring as a foundational element of your data quality strategy not only promotes reliability but aligns with the dynamic nature of your organization. As you iterate and improve, you can remain responsive to the changing needs of your business and your clients, ensuring that your data remains not just current but also contextually relevant. The commitment to ongoing monitoring creates a culture where quality is everyone’s responsibility, thereby nurturing a long-term investment in your organization’s future.

Best Practices for Data Entry and Collection

For all the advancements in data analytics and the growing emphasis on data-driven decision-making, the foundation of high-quality data still lies in how it is collected and entered. Implementing robust data entry processes is crucial. You need to be meticulous when designing forms and interfaces to minimize errors and enhance accuracy. A well-thought-out design not only streamlines the data collection process but also ensures that data integrity is upheld. This involves creating user-friendly interfaces that guide data collectors through intuitive workflows, making it easier for them to enter information correctly.

Designing Data Entry Forms and Interfaces

Data entry forms are often the first point of interaction for users providing input, so their design should prioritize simplicity and clarity. You should incorporate elements such as drop-down lists, checkboxes, and pre-filled fields wherever possible. These elements reduce typing errors and speed up the data collection process. It’s important to think critically about the order in which you present fields: grouping related information together and using logical sequences not only makes the form easier to navigate but also decreases the likelihood of mistakes. Keeping the interface clean and free from visual clutter helps you and your team stay focused on what matters: accurate data entry.

Moreover, you can enhance the effectiveness of your data entry forms by providing clear instructions and contextual help. Inline hints and tooltips can guide users through obscure areas, ensuring that they understand what is required for each field. You might even consider implementing validation rules that prevent users from submitting incomplete or inconsistent information. For instance, if a certain field mandates numeric input, having a built-in check will save you from headaches later on as you try to clean and standardize your data sets. Think of your data entry forms as a crucial step in the data quality pipeline; their effective design can lead to better outcomes downstream.
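Validation rules like these can be declared per field and applied before a form submission is accepted. The following is a minimal sketch; the rule keys (`required`, `integer`, `choices`), the field names, and the country list are made up for the example.

```python
def validate_entry(entry, rules):
    """Return a list of human-readable problems; an empty list means valid."""
    errors = []
    for field, rule in rules.items():
        value = str(entry.get(field, "")).strip()
        if not value:
            if rule.get("required"):
                errors.append(f"{field}: required")
            continue
        if rule.get("integer"):
            try:
                int(value)
            except ValueError:
                errors.append(f"{field}: must be a whole number")
        if "choices" in rule and value not in rule["choices"]:
            allowed = ", ".join(rule["choices"])
            errors.append(f"{field}: must be one of {allowed}")
    return errors


# Hypothetical rules for an order form.
rules = {
    "quantity": {"required": True, "integer": True},
    "country": {"required": True, "choices": ("DE", "FR", "US")},
}

print(validate_entry({"quantity": "12", "country": "DE"}, rules))
print(validate_entry({"quantity": "twelve", "country": "XX"}, rules))
print(validate_entry({"country": "FR"}, rules))
```

Returning every problem at once, rather than stopping at the first, lets the form show the user everything that needs fixing in a single pass.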

Your commitment to high-quality data won’t go unnoticed. By designing thoughtful data entry forms and interfaces, you lay down the initial framework for the integrity of your data. Not only does this benefit you in the short term, but it also cultivates a culture of data accuracy within your organization, encouraging everyone to take ownership of data quality.

Training and Guidelines for Data Collectors

Forms and procedures are only as good as the people who use them. To ensure that your data collection efforts yield reliable results, it’s crucial to establish comprehensive training programs and clear guidelines for data collectors. Start by outlining the importance of data quality to your team. When data collectors understand the impact of their work, they’re likely to be more invested in accuracy. You should provide targeted training that covers not only the technical aspects of data entry but also the reasoning behind the best practices you wish to implement. This includes the significance of consistency, accuracy, and attention to detail during the data entry process.

Practices like regular check-ins and refreshers can greatly benefit your data collectors. You might even want to create easy-to-follow reference materials, such as quick guides or checklists, that your team can refer to during data collection. Building a robust onboarding process for new data collectors establishes a strong foundation, while ongoing education reinforces accountability and highlights the evolving nature of data management techniques. Remember that a culture of continuous improvement should be reflected in your team’s day-to-day efforts to maintain clean and reliable data.

Data Quality in Big Data and Analytics

After stepping into big data, you soon realize that the vast amounts of information generated daily can be both a treasure trove and a quagmire. Analytics in this landscape presents unique challenges due to the sheer volume, velocity, and variety of data. When data is multiplied across various platforms, sources, and formats—everything from structured databases to unstructured social media feeds—ensuring its quality becomes a daunting task. You might encounter issues such as data duplication, inconsistencies, and inaccuracies that can obscure insights and render your analysis unreliable. Moreover, the rapid pace at which data is generated can outstrip your ability to clean and validate it effectively, leading to decisions based on flawed information. It’s a paradox: the very power of big data that can drive innovation can also lead to misguided strategies if the underlying data quality is not meticulously maintained.

The complications don’t stop there; the integration of disparate data sources can create further challenges. Each data stream may possess its own set of rules, formats, and quality standards, making it difficult for you to develop a unified view of the information. For example, merging customer data from a CRM system with behavioral data from a website may lead to discrepancies if one dataset is outdated or misaligned with the current information you are analyzing. Errors in data entry, lack of standardization, and the influence of human biases can compound these issues, resulting in what is known as “garbage in, garbage out.” Thus, the clarity and reliability of your analytics are intricately tied to the cleansing and validation of your datasets before any meaningful conclusions can be drawn.
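Before merging two sources like these, it can help to reconcile them and list the mismatches. The sketch below assumes both sources are keyed by a shared customer ID and carry an `email` and an `updated` date; those field names, the 30-day lag limit, and the sample data are hypothetical.

```python
from datetime import date


def reconcile(crm, web, max_lag_days=30):
    """Compare two sources keyed by customer id and list the mismatches."""
    issues = []
    for cid in sorted(crm.keys() | web.keys()):
        if cid not in crm:
            issues.append((cid, "missing in CRM"))
        elif cid not in web:
            issues.append((cid, "missing in web data"))
        else:
            if crm[cid]["email"].lower() != web[cid]["email"].lower():
                issues.append((cid, "email mismatch"))
            lag = abs((crm[cid]["updated"] - web[cid]["updated"]).days)
            if lag > max_lag_days:
                # One side is likely outdated relative to the other.
                issues.append((cid, "last-updated dates too far apart"))
    return issues


# Hypothetical extracts from a CRM and a website analytics store.
crm = {
    101: {"email": "ana@example.com", "updated": date(2024, 5, 1)},
    102: {"email": "bo@example.com", "updated": date(2024, 3, 1)},
    103: {"email": "cy@example.com", "updated": date(2024, 5, 2)},
}
web = {
    101: {"email": "Ana@Example.com", "updated": date(2024, 5, 3)},
    102: {"email": "bo@example.com", "updated": date(2024, 5, 1)},
    104: {"email": "di@example.com", "updated": date(2024, 5, 4)},
}

for customer_id, problem in reconcile(crm, web):
    print(customer_id, problem)
```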

Finally, as you explore the ethical implications surrounding data quality in analytics, you might find yourself at a crossroads. With increasing scrutiny on data privacy laws and regulations, the need for high-quality data not only supports effective decision-making but also fosters trust with consumers. If your datasets are riddled with inaccuracies or biases, the implications can extend beyond poor analytics to potential violations of data integrity. The stakes are high, and you must navigate this complex landscape with diligence to shield your organization from reputational damage and legal repercussions. Emphasizing data quality is not just about improving your analytics; it’s about cultivating a responsible approach to data usage that respects the rights of individuals and the integrity of decision-making processes.

Unique Challenges in Big Data and Analytics

Analytics in big data can feel like trying to find a needle in a haystack. With copious amounts of information at your disposal, the challenge lies in identifying what data is truly relevant for your analysis. High volumes of irrelevant or redundant data can lead to analysis paralysis, a condition where data overload inhibits effective decision-making. The lack of recognizable patterns within vast datasets may throw you off course, creating a fog of confusion that can obscure valuable insights. As trends evolve and new data streams emerge, what was once reliable may rapidly become obsolete, making it important for you to stay agile and adapt your data quality processes in real time.

Furthermore, the velocity of big data presents its own unique hurdle. Real-time data processing is often critical for timely decision-making, but the faster data flows in, the more challenging it becomes to apply rigorous quality checks. You may find yourself caught in a perpetual race, trying to keep up with data ingestion while simultaneously ensuring that it meets your quality standards. This urgency may lead to oversights, inadvertently introducing poor-quality data into your analyses that can skew results and undermine confidence in your conclusions. The dynamic nature of big data demands that you develop a system that balances speed with precision, allowing you to act on real-time insights without compromising the reliability of your analysis.

Strategies for Ensuring Data Quality in Big Data Environments

In big data environments, ensuring data quality calls for a multi-faceted approach. First and foremost, establishing clear data governance protocols is essential. Define data ownership, roles, and accountability across your organization to create an environment where everyone understands the importance of maintaining data integrity. Next, implement automated data validation processes that can detect anomalies or duplicates as data is ingested. This not only helps in maintaining data quality but also saves precious time, allowing your teams to focus on interpreting insights rather than spending excessive hours cleaning data.
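Validation at ingestion can be sketched as a generator that inspects each record as it arrives and attaches any issues it finds. The `ingest` function, the `order_id` and `amount` fields, and the accepted amount range are assumptions for the example; a production pipeline would also bound the memory used to track seen keys.

```python
def ingest(stream, key, amount_range=(0, 10_000)):
    """Yield (record, issues) pairs; issues is empty for clean records."""
    seen = set()
    low, high = amount_range
    for record in stream:
        issues = []
        record_key = record.get(key)
        if record_key in seen:
            issues.append("duplicate")
        else:
            seen.add(record_key)
        amount = record.get("amount")
        if not isinstance(amount, (int, float)) or not low <= amount <= high:
            issues.append("amount out of range")
        yield record, issues


# Hypothetical incoming order records.
stream = [
    {"order_id": "A1", "amount": 120.0},
    {"order_id": "A2", "amount": -5},
    {"order_id": "A1", "amount": 120.0},
]

flagged = [
    (record["order_id"], issues)
    for record, issues in ingest(stream, key="order_id")
    if issues
]
print(flagged)
```

Because the generator yields every record together with its issues, the caller decides whether to reject, quarantine, or merely log flagged records.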

In addition, consider adopting advanced analytics tools that employ machine learning algorithms to identify patterns and predict potential data quality issues. These tools can help flag discrepancies and guide your decisions proactively rather than retroactively. Collaboration is also key; work closely with cross-functional teams to establish shared data standards that unify how information is processed, ensuring that quality is a shared responsibility throughout your organization. In this age of big data, integrating quality checks into every stage of the data lifecycle is not just advantageous; it’s essential for robust and credible analytics.
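Full machine learning tooling is beyond a short example, but the underlying idea of flagging values that break an established pattern can be shown with a simple statistical stand-in. The sketch below is not machine learning: it uses the median absolute deviation (MAD) with the commonly used 0.6745 scaling constant and a 3.5 cutoff, and the daily row counts are invented.

```python
from statistics import median


def mad_outliers(values, threshold=3.5):
    """Return the values whose modified z-score exceeds `threshold`."""
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        return []  # no spread to measure against
    return [v for v in values if 0.6745 * abs(v - med) / mad > threshold]


# Hypothetical number of rows loaded per day, in thousands.
daily_rows = [10, 11, 9, 10, 12, 10, 95]
print(mad_outliers(daily_rows))
```

A median-based measure is used here because a single extreme value would distort a mean and standard deviation computed from the same data.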

Data Quality Tools and Technologies

Now, let’s delve into the data quality tools and technologies that are pivotal in achieving your data quality objectives. In today’s fast-paced digital environment, organizations face the compounding challenge of managing vast amounts of data, all while ensuring that this data remains accurate, complete, and consistent. An array of software solutions is available to help you tackle these challenges, allowing you to identify and rectify data quality issues before they escalate into significant problems. These tools range from simple data cleansing applications to comprehensive data governance platforms that integrate various functionalities such as data profiling, validation, enrichment, and monitoring. By leveraging these technologies, you can significantly reduce data discrepancies, ultimately leading to more reliable and actionable insights.

A survey of the available data quality solutions reveals an array of capabilities tailored to different business needs. These tools typically incorporate features such as automated data cleansing, which can help you detect and eliminate incorrect entries; data profiling to assess data quality; and data enrichment services that enhance existing data with additional relevant information. Furthermore, many modern data quality solutions support advanced analytics and machine learning algorithms that empower you to predict data issues and automate corrective actions. Whether you are part of a small business or a large corporation, investing in the right data quality software can be a game changer, ensuring that your data ecosystem remains healthy and robust.

Ultimately, as you navigate this landscape of data quality tools and technologies, you will also find that usability and integration capabilities are key factors in their effectiveness. Look for platforms that integrate easily with your existing data systems and are user-friendly, enabling your team to quickly adapt to new workflows. This minimizes disruption while maximizing the potential for improved data quality outcomes. As you explore your options, consider how these tools can not only enhance data accuracy but also foster a culture of data stewardship within your organization, enabling everyone to take ownership of the quality of the data they handle.

Evaluating and Selecting Data Quality Tools

Technologies designed to ensure data quality are abundant, but how do you navigate through them to find the tools that best fit your organization’s needs? Evaluating and selecting data quality tools involves several key considerations that can mean the difference between successful implementation and a futile endeavor. Start by assessing the specific data quality challenges your organization faces. Are you dealing with missing data, duplicates, or inconsistencies? Identifying these pain points will help you narrow your options effectively. Moreover, it’s crucial to evaluate the scalability of the tool—consider whether it can grow alongside your data requirements as your organization evolves.

Additionally, take a close look at the features that different tools offer. Some tools may excel in data profiling and monitoring, while others may focus more on data cleansing or enrichment capabilities. Pay attention to user reviews and case studies as these can provide insightful information on how well the tool performs in real-world scenarios. Another aspect to consider is the availability of support and training options the vendor offers. A responsive support team can significantly reduce the learning curve and ensure a smoother implementation process.

Data quality tool selection is not just a one-time decision; it requires ongoing assessment and adjustment. Your organization may evolve, requiring different functionalities over time. Regularly re-evaluate your tools to ensure they still meet your data quality standards and address any new challenges that arise. By staying proactive and responsive, you empower your organization to maintain a robust data quality framework that can adapt to changing data landscapes, ensuring that you always have clean and reliable data at your fingertips.

Overcoming Common Data Quality Challenges

Addressing Resistance to Change

Challenges in data quality often stem from an inherent resistance to change within organizations. When you introduce new processes, tools, or systems aimed at improving data quality, you may encounter pushback from team members who are accustomed to the existing methods of operation. This resistance can manifest in many forms—skepticism towards new software, reluctance to adopt new practices, or even outright refusal to acknowledge that a problem exists. To overcome this barrier, it’s vital to start with strong leadership and a clear communication strategy. Educate your team about the importance of data quality and how it directly impacts their work as well as the overall success of the organization. When your employees understand the ‘why’ behind the changes, they’re more likely to engage positively with new initiatives.

Incorporate training and resources that empower your staff. Providing training sessions that outline the benefits of improved data practices not only breaks down the resistance but also fosters a culture of accountability and continuous improvement. You might even consider forming cross-departmental teams to tackle data quality issues. This collaborative approach not only reduces silos but also enables diverse perspectives that can enrich the conversation around data quality. When team members see their colleagues invested in new processes, they may be more likely to follow suit and participate fully in the transformation process.

Finally, recognize and celebrate small wins throughout this journey. Change is challenging, and acknowledging the progress made can motivate your team to keep moving forward. By recognizing contributions to data quality improvements, you create an environment that values initiative and fosters adaptability. This ongoing reinforcement will transform your workplace culture, gradually diminishing resistance and making room for innovation and growth in data practices.

Managing Data Quality in Distributed Environments

Any organization that operates in a distributed environment faces a unique set of challenges when it comes to maintaining data quality. When teams work remotely or across various geographical locations, inconsistencies in data entry, interpretation, and usage can creep in. You may find that different departments utilize different software, or worse, use varying definitions for the same data fields. This fragmentation can cause significant issues down the line, leading to unreliable insights and decisions based on flawed data. To combat this, a unified approach to data governance is vital. Establish standard protocols that offer clear guidelines for data collection, storage, and sharing across all locations. This consistency enables everyone to work from the same playbook, significantly enhancing data integrity.
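One small, concrete piece of such a protocol is a shared mapping from each team’s local field names to a single canonical schema. The alias table and field names below are invented for illustration; the point is that the mapping lives in one place and every location applies it the same way.

```python
# Hypothetical aliases used by different offices for the same fields.
FIELD_ALIASES = {
    "cust_id": "customer_id",
    "customerid": "customer_id",
    "e-mail": "email",
    "email_address": "email",
}


def standardize(record, aliases=FIELD_ALIASES):
    """Rename a record's fields to the canonical names in `aliases`."""
    standardized = {}
    for name, value in record.items():
        key = name.strip().lower()
        canonical = aliases.get(key, key)
        if canonical in standardized and standardized[canonical] != value:
            # Two aliases of the same field disagree: surface it, don't guess.
            raise ValueError(f"conflicting values for {canonical!r}")
        standardized[canonical] = value
    return standardized


berlin = {"Cust_ID": 7, "E-mail": "ana@example.com"}
austin = {"customerid": 7, "email_address": "ana@example.com"}

print(standardize(berlin))
print(standardize(berlin) == standardize(austin))
```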

Another tactic you can employ is leveraging technology to bridge the gaps inherent in distributed environments. Implementing cloud-based data management tools can standardize processes and facilitate real-time collaboration among teams. Cloud solutions can provide a centralized repository where all data can be accessed, modified, and updated by team members, regardless of their location. Furthermore, investing in quality control measures, such as automated data validation processes, can help detect and rectify discrepancies quickly. Such tools can alert you to issues as they arise, enhancing your ability to respond and uphold data quality continuously.

To solidify these practices, regular audits and data quality assessments are advisable. These practices not only help to identify areas of concern but also reinforce a shared understanding of the importance of data quality among teams dispersed across locations. Facilitate periodic meetings where team members can convene to discuss their experiences and share best practices on maintaining data quality. This ongoing dialogue encourages a culture of collaboration and accountability, vital for managing data quality effectively in distributed environments.

Conclusion

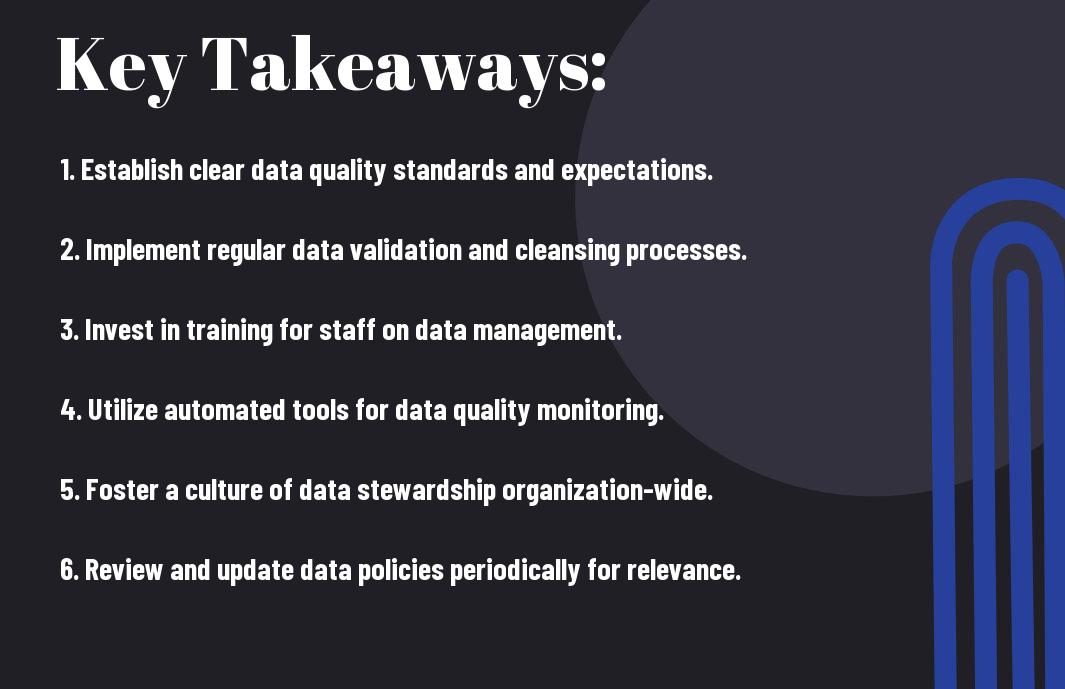

Ultimately, ensuring clean and reliable data is not just a technical requirement; it’s a strategic asset that can significantly impact your decision-making and operational efficiency. As you’ve learned through various best practices, from implementing data governance frameworks to fostering a culture of accountability within your organization, the commitment to data quality is a multifaceted endeavor. By nurturing an environment where data integrity is prioritized, you empower your teams to harness the full potential of analytics, which can lead to more informed decisions and enhanced business performance. It’s important to recognize that data quality is not a one-time effort but a continuous journey that requires regular assessment and adaptation.

Your role in this journey is crucial, as you are the linchpin connecting your organization’s strategies with the underlying data processes. By adopting best practices such as regular data audits, user training, and leveraging automated tools for data cleansing, you position your organization to respond to challenges more effectively. Remember that investing in data quality today will yield dividends tomorrow, creating a robust foundation that supports your overall business objectives. Don’t underestimate the importance of fostering a culture that values data accuracy; after all, it is only through clean data that your insights can drive real change.

Finally, for a deeper dive into effective data quality strategies, consider exploring resources such as Data Quality: 7 Key Issues & Best Practices to Avoid. Embracing these practices will not only prepare you to face the complexities of today’s data landscape but also empower you to leverage clean data as a strategic advantage. In an era where information is more abundant than ever, your ability to distill that information into actionable insights through quality data can set you apart from the competition. Investing in data quality management is investing in your organization’s future success, and the time to act is now.

FAQ

Q: What is data quality and why is it important?

A: Data quality refers to the condition of a dataset, which encompasses aspects such as accuracy, completeness, consistency, reliability, and timeliness. It is important because clean and reliable data is vital for informed decision-making, improving business operations, and enhancing customer experiences. Poor data quality can lead to erroneous conclusions, increased costs, and damage to a company’s reputation.

Q: What are the common best practices for ensuring data quality?

A: Some best practices for ensuring data quality include: establishing clear data governance policies, implementing data validation techniques, regularly auditing and cleansing data, training staff on data entry and maintenance, and utilizing data management tools. These practices help maintain the integrity of data throughout its lifecycle.

Q: How can organizations evaluate the quality of their existing data?

A: Organizations can evaluate the quality of their existing data by performing data profiling, which involves analyzing data sets to identify anomalies, missing values, and inconsistencies. They can use metrics such as accuracy rates, completeness scores, and timeliness assessments. Regularly scheduled audits and data quality assessments can also provide insights into areas that need improvement.

Q: What role does technology play in data quality management?

A: Technology plays a vital role in data quality management by providing tools that automate data cleansing, validation, and monitoring processes. Data quality tools can help identify duplicates, enforce data integrity rules, and manage data entry workflows. Additionally, these tools facilitate real-time data quality checks and reporting, allowing organizations to maintain high-quality standards continuously.

Q: How can organizations build a culture of data quality within their teams?

A: Organizations can build a culture of data quality by prioritizing data quality initiatives in their business strategies, providing training and resources for employees on best practices, and promoting accountability for data management among all team members. Encouraging collaboration across departments and highlighting the value of high-quality data in decision-making can also foster a culture where data quality is seen as a shared responsibility.