Data analysis can seem daunting, but fear not! In this guide, you will find essential tips to kickstart your journey into the world of data analysis. Whether you are deciphering data sets or navigating statistical tools, this beginner’s resource will pave the way for your data analysis success. For a more in-depth exploration, check out our article on Demystifying Data Analytics: A Beginner’s Guide.

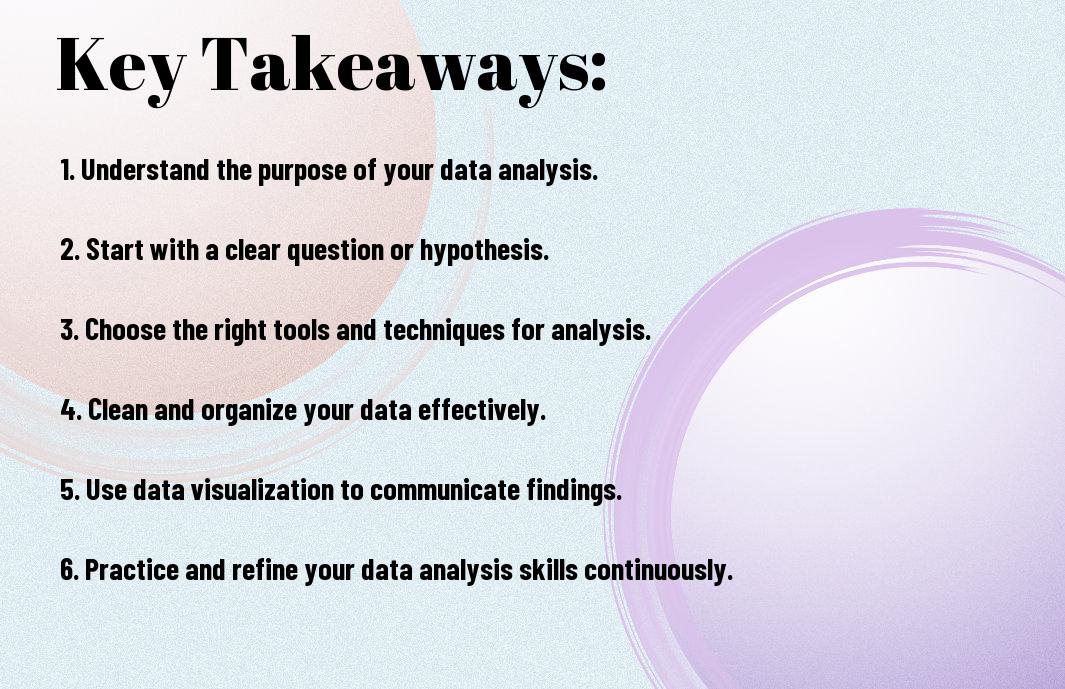

Key Takeaways:

- Understand the Basics: Before diving into data analysis, grasp the fundamental concepts and tools.

- Define Your Goals: Clearly outline what you want to achieve with your analysis to stay focused.

- Clean and Prepare Data: Ensure your data is accurate and organized before starting the analysis process.

- Choose the Right Tools: Select the appropriate software or programming language that aligns with your goals and skill level.

- Visualize Your Data: Utilize charts, graphs, and other visuals to help understand and communicate your findings effectively.

- Practice Makes Perfect: Commit to regular practice and experimentation to improve your data analysis skills over time.

- Seek Feedback and Learn from Others: Don’t hesitate to ask for feedback from peers or mentors to enhance your analysis techniques.

Setting the Stage

What is Data Analysis?

The world of data analysis can seem intimidating at first, but the core idea is simple. Data analysis is the process of inspecting, cleaning, transforming, and modeling data to discover useful information, inform conclusions, and support decision-making. At its core, data analysis is about extracting insights from raw data and presenting them in a meaningful way. In today’s data-driven world, the ability to analyze data effectively is a highly valuable skill.

When you embark on your journey in data analysis, you will encounter various techniques and tools such as statistical analysis, data mining, and machine learning. These tools help you uncover patterns, relationships, and trends in the data that might not be immediately apparent. By harnessing the power of data analysis, you can make informed decisions, solve complex problems, and even predict future outcomes based on historical data.

As a beginner in data analysis, it’s necessary to familiarize yourself with common data analysis techniques and start practicing on real-world datasets. By honing your skills and gaining hands-on experience, you will become more comfortable and confident in your ability to analyze data effectively.

Why is Data Analysis Important?

Setting the stage for data analysis is crucial because it allows you to unlock valuable insights that can drive strategic decisions and improve business performance. In today’s hyper-competitive business landscape, organizations that can leverage data to their advantage have a significant edge over their competitors. By harnessing the power of data analysis, you can gain a deeper understanding of your customers, market trends, and operational efficiency.

Effective data analysis can help you identify bottlenecks in your processes, optimize your marketing campaigns, and improve customer satisfaction. By using data analysis to inform your decisions, you can minimize risks, seize opportunities, and steer your organization towards success. So, whether you’re a business professional, a researcher, or a student, mastering the art of data analysis is a valuable skill that will open up a world of possibilities for you.

Preparing for Data Analysis

There’s a lot to consider when preparing for data analysis. This stage is crucial as it sets the foundation for the entire process. Gathering data is the first step: collecting all the relevant information that you will use to gain insights and draw conclusions. Whether you gather your data from surveys, databases, or online sources, it’s essential to ensure that the data is accurate, relevant, and sufficient for your analysis.

Gathering Data

Data gathering can be done through primary sources like surveys and interviews or secondary sources such as databases and research articles. It is important to evaluate the credibility and reliability of the data sources to ensure the accuracy of your analysis. Organizing the data in a systematic way will make it easier for you to analyze and interpret the information effectively.

Cleaning and Organizing Data

With the data gathered, the next step is cleaning and organizing it. This process involves removing any irrelevant or duplicate data, correcting errors, and structuring the data in a format that is suitable for analysis. By cleaning and organizing your data properly, you can avoid errors and inconsistencies that may affect the accuracy of your results.

To ensure that your analysis is based on reliable and accurate data, it is essential to invest time and effort in cleaning and organizing your data. Consistent formatting, dealing with outliers, and handling missing values are some of the key aspects to focus on during this stage. Remember, the quality of your analysis depends heavily on the quality of your data.
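The cleaning steps described above can be sketched in a few lines of Python. This is a minimal illustration using only the standard library; the rows, field names, and the `clean_rows` helper are invented for the example and are not taken from any particular dataset or tool.

```python
# Hypothetical raw survey rows: names, fields, and values are invented for illustration.
raw_rows = [
    {"name": " Alice ", "city": "paris", "age": "34"},
    {"name": "Bob", "city": "Berlin", "age": ""},        # missing age
    {"name": " Alice ", "city": "paris", "age": "34"},   # exact duplicate
    {"name": "Chloe", "city": "BERLIN", "age": "29"},
]

def clean_rows(rows):
    """Normalize formatting, drop duplicates, and mark missing ages as None."""
    seen = set()
    cleaned = []
    for row in rows:
        record = {
            "name": row["name"].strip(),                  # remove stray whitespace
            "city": row["city"].strip().title(),          # consistent capitalization
            "age": int(row["age"]) if row["age"].strip() else None,
        }
        key = (record["name"], record["city"], record["age"])
        if key in seen:
            continue  # skip rows that are identical after normalization
        seen.add(key)
        cleaned.append(record)
    return cleaned

cleaned_rows = clean_rows(raw_rows)
for record in cleaned_rows:
    print(record)
```

Marking a missing age as `None` rather than guessing a value keeps the gap visible, so the decision about how to handle it can be made deliberately later.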

Tools and Software for Data Analysis

An important aspect of data analysis is having the right tools and software to work with. There is a wide range of tools available that can help you analyze and visualize your data effectively. From programming languages like Python and R to software applications like Excel and Tableau, choosing the right tools that suit your needs and skill level is imperative for a successful data analysis process.

Software applications like Excel are user-friendly and widely used for data analysis tasks, whereas programming languages like Python offer more advanced capabilities for handling complex data sets. Depending on your level of expertise and the complexity of your analysis, you can choose the tool that best fits your requirements. Learning to use these tools effectively will enhance your data analysis skills and enable you to derive meaningful insights from your data.

Descriptive Statistics

All beginners in data analysis need to understand descriptive statistics, which summarize and organize data to make it more understandable. There are two main components to descriptive statistics: measures of central tendency and measures of variability.

Measures of Central Tendency

Any analysis starts with measures of central tendency, which help you understand the center of your data. The most common measures of central tendency are the mean, median, and mode. The mean is the average of all data points, the median is the middle value when data is ordered, and the mode is the most frequent value. Each measure provides valuable insight into your dataset, giving you a clearer picture of the typical or central value.

Calculating these measures accurately ensures that you have a comprehensive understanding of your data’s central tendencies, guiding you in making informed decisions based on your analysis.
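As a quick illustration, the three measures can be computed with Python's built-in `statistics` module. The order counts below are hypothetical and were chosen so that one unusually large value shows how the mean and median can disagree.

```python
import statistics

# Hypothetical daily order counts, invented for illustration.
orders = [12, 15, 15, 18, 20, 22, 95]

mean_orders = statistics.mean(orders)      # sum of the values divided by their count
median_orders = statistics.median(orders)  # middle value of the sorted data
mode_orders = statistics.mode(orders)      # most frequent value

print(f"mean={mean_orders:.2f}, median={median_orders}, mode={mode_orders}")
```

Here the single value of 95 pulls the mean (about 28.14) well above the median (18), which is why it helps to look at more than one measure.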

Measures of Variability

With measures of variability, you delve deeper into the spread or dispersion of your data points. Common measures of variability include range, variance, and standard deviation. The range gives you the difference between the highest and lowest values. Variance is the average squared deviation of the data points from the mean, and standard deviation is its square root, which expresses the spread in the same units as the data. These metrics help you quantify how diverse or similar your data points are.

Understanding the variability in your data is crucial as it highlights the degree of consistency or variation in your dataset, allowing you to identify outliers and patterns that can influence your analysis outcomes.

It is important to pay attention to measures of variability to grasp the full picture of your data distribution. Variability metrics complement central tendency measures by providing a comprehensive view of the data’s distribution and dispersion. By combining both central tendency and variability measures, you gain a more robust understanding of your dataset, enhancing the accuracy and reliability of your analysis results.
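The measures of variability can be sketched with the standard `statistics` module. The scores are hypothetical; the example shows both the population versions (dividing by n) and the sample variance (dividing by n - 1), since which one applies depends on whether your data is the whole population or a sample of it.

```python
import statistics

# Hypothetical test scores, invented for illustration (their mean is 5).
scores = [2, 4, 4, 4, 5, 5, 7, 9]

value_range = max(scores) - min(scores)          # highest minus lowest value
pop_variance = statistics.pvariance(scores)      # average squared deviation from the mean
pop_std = statistics.pstdev(scores)              # square root of the population variance
sample_variance = statistics.variance(scores)    # divides by n - 1 instead of n

print(value_range, pop_variance, pop_std, round(sample_variance, 3))
```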

Data Visualization

Data visualization is another crucial aspect of data analysis that helps you interpret and communicate your findings effectively. Charts, graphs, and plots are powerful tools to visualize patterns, trends, and relationships within your data. Common types of data visualization include histograms, scatter plots, and box plots, each suitable for different types of data analysis.

Visualizing your data not only makes it easier for you to understand but also enables you to convey your insights to others more efficiently. It is a crucial skill for every data analyst to master, as it enhances the clarity and impact of your analysis.

Concerning data visualization, remember that selecting the appropriate type of visualization for your data is important. Different visualizations are suited to different data types and varying objectives. By choosing the right visualization technique, you can effectively showcase patterns, trends, and anomalies in your data, making your analysis more engaging and insightful.
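To make the idea of a histogram concrete, here is a small text-only sketch that groups values into bins and prints one `#` per observation. In practice you would normally use a plotting library; this standard-library version only illustrates the binning step, and the response times and the `bin_counts` helper are invented for the example.

```python
from collections import Counter

# Hypothetical response times in seconds, invented for illustration.
response_times = [1.2, 1.9, 2.1, 2.4, 2.8, 3.1, 3.3, 3.7, 4.5, 7.9]
BIN_WIDTH = 1.0

def bin_counts(values, bin_width):
    """Return {bin_start: number of values in that bin}, sorted by bin_start."""
    counts = Counter(int(v // bin_width) * bin_width for v in values)
    return dict(sorted(counts.items()))

histogram = bin_counts(response_times, BIN_WIDTH)
for bin_start, count in histogram.items():
    print(f"{bin_start:4.1f} to {bin_start + BIN_WIDTH:4.1f} | {'#' * count}")
```

Even in this rough form, the isolated bar near 7 seconds stands out from the cluster between 1 and 5 seconds, which is the kind of anomaly a histogram is good at revealing.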

Data Exploration

Your journey into the world of data analysis begins with data exploration. This phase involves getting to know your dataset inside out, understanding its components, structure, and relationships. By delving deep into your data, you can uncover insights that will guide your analytical processes and decision-making. Here are some important techniques for effective data exploration.

Identifying Patterns and Trends

To make sense of your data, you need to identify patterns and trends within it. This involves looking for recurring themes, relationships, or anomalies that can provide valuable insights. By visualizing your data through charts, graphs, and plots, you can easily spot trends over time, patterns in distribution, or correlations between variables. This step is crucial for gaining a preliminary understanding of your data before stepping into more complex analyses.

Patterns and trends can offer valuable insights into consumer behavior, market trends, or operational inefficiencies. By identifying and understanding these patterns, you can make informed decisions and predictions that drive your business forward. Remember, data exploration is not just about crunching numbers; it’s about telling a story through your data.

Correlation and Causation

When exploring your data, it’s important to distinguish between correlation and causation. Correlation refers to a relationship between two variables, where a change in one is associated with a change in the other. Causation, on the other hand, implies that one variable directly causes a change in another. Understanding the difference is crucial to avoid making erroneous assumptions or drawing misleading conclusions.

With proper analysis techniques, you can determine whether a correlation between variables is statistically significant or merely a coincidence. Remember, just because two variables are correlated does not mean that one causes the other. Dig deeper into the data and consider external factors, such as a third variable that influences both. A statistical test can show that an association is unlikely to be due to chance, but supporting a causal claim usually takes a controlled experiment or a carefully designed study.
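A short sketch can make the distinction concrete. The function below computes the Pearson correlation coefficient by hand; the monthly figures are hypothetical and were chosen as a classic case where two variables move together because a third factor (hot weather) drives both.

```python
import math

def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sum((x - mean_x) ** 2 for x in xs)
    spread_y = sum((y - mean_y) ** 2 for y in ys)
    return covariance / math.sqrt(spread_x * spread_y)

# Hypothetical monthly figures, invented for illustration.
ice_cream_sales = [20, 35, 50, 65, 80]
sunburn_cases = [3, 6, 8, 12, 15]

r = pearson_r(ice_cream_sales, sunburn_cases)
print(f"r = {r:.3f}")
```

The coefficient comes out very close to 1, yet ice cream does not cause sunburn. The number measures how closely the two series move together and nothing more.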

Handling Missing Data

Dealing with missing data is a common challenge in data analysis. Handling missing data is crucial to ensure the accuracy and reliability of your analyses. There are various techniques for addressing missing data, including imputation, deletion, or using predictive models to fill in the gaps. Each method has its own advantages and limitations, so it’s important to choose the most suitable approach based on the nature of your data and analysis goals.

It’s important to note that ignoring missing data or applying inappropriate techniques can introduce bias and errors into your analysis, leading to inaccurate results and flawed conclusions. By carefully handling missing data, you can ensure that your analysis is robust and trustworthy, providing meaningful insights to guide your decision-making process.
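Two of the options mentioned above, deletion and imputation, can be compared side by side. This is a minimal sketch with hypothetical sensor readings, using median imputation as one simple choice among many.

```python
import statistics

# Hypothetical sensor readings, invented for illustration; None marks a missing value.
readings = [21.5, None, 22.0, 23.5, None, 22.5]

# Option 1: deletion keeps only the observed values (fewer data points).
observed = [r for r in readings if r is not None]

# Option 2: median imputation fills each gap with the median of the observed values.
fill_value = statistics.median(observed)
imputed = [fill_value if r is None else r for r in readings]

print("after deletion:  ", observed)
print("after imputation:", imputed)
```

Deletion shrinks the dataset from six values to four, while imputation keeps all six but makes the data look less variable than it may really be. Neither is free of trade-offs.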

Inferential Statistics

Keep in mind that inferential statistics is all about making predictions and inferences about a population based on a sample of data. This statistical approach helps you draw conclusions and make generalizations about the larger group from which the sample was taken. It involves techniques like hypothesis testing, confidence intervals, and significance testing.

Hypothesis Testing

One important concept in inferential statistics is hypothesis testing. This involves formulating a hypothesis about a population parameter and using sample data to determine if there is enough evidence to reject the null hypothesis. By setting up a null hypothesis (often denoted as H0) and an alternative hypothesis (denoted as Ha), you can statistically test your assumptions and make informed decisions based on the results.

Any hypothesis test involves calculating a test statistic, determining a significance level, and comparing the test statistic to a critical value from the appropriate statistical distribution. If the test statistic falls into the rejection region, you can reject the null hypothesis in favor of the alternative hypothesis. Hypothesis testing allows you to assess the likelihood of your sample results occurring by chance and provides a systematic way to make decisions based on data.
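The steps above can be sketched as a one-sample t-test. The fill weights and the target of 500 g are hypothetical, and the critical value 2.262 is the standard two-sided t-table value for a 0.05 significance level with 9 degrees of freedom; with a different sample size you would need a different critical value.

```python
import math
import statistics

# Hypothetical fill weights in grams, invented for illustration.
sample = [498, 502, 497, 499, 501, 496, 500, 498, 497, 499]
mu_0 = 500          # H0: the population mean is 500 g
t_critical = 2.262  # two-sided critical value for alpha = 0.05, df = 9 (t table)

n = len(sample)
sample_mean = statistics.mean(sample)
sample_sd = statistics.stdev(sample)           # sample standard deviation (n - 1)
standard_error = sample_sd / math.sqrt(n)

t_statistic = (sample_mean - mu_0) / standard_error
reject_null = abs(t_statistic) > t_critical    # True if t falls in the rejection region

print(f"t = {t_statistic:.3f}, reject H0: {reject_null}")
```

In this example the test statistic is about -2.18, which falls just short of the rejection region, so the sample does not provide enough evidence to reject the null hypothesis at the 0.05 level. That is not the same as proving the null hypothesis true.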

Confidence Intervals

One of the most valuable tools in inferential statistics is the confidence interval. A confidence interval gives a range of plausible values for the true population parameter at a stated confidence level: a 95% confidence interval, for example, comes from a procedure that captures the true parameter in about 95% of repeated samples. Usually expressed as an estimate plus or minus a margin of error, confidence intervals quantify the uncertainty in your estimate and show how precise your sample data is.

Intervals can be interpreted as a measure of how much you should trust your sample statistics as estimates of the population parameters. The wider the interval, the less precise your estimate, while a narrower interval indicates a more precise estimation. Confidence intervals are crucial for drawing meaningful conclusions from your data and understanding the reliability of your results.
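As a rough sketch, a confidence interval for a mean can be computed as the sample mean plus or minus a margin of error. The example below assumes a normal approximation, which is a simplification: for small samples like this one, a t-distribution would give a somewhat wider interval. The scores and the `margin_of_error` helper are invented for illustration.

```python
import math
import statistics
from statistics import NormalDist

def margin_of_error(sd, n, confidence=0.95):
    """Half-width of a normal-approximation confidence interval for a mean."""
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)  # about 1.96 for 95%
    return z * sd / math.sqrt(n)

# Hypothetical satisfaction scores, invented for illustration.
scores = [72, 75, 78, 71, 74, 77, 73, 76, 75, 74, 72, 78, 76, 73, 75, 74]

sample_mean = statistics.mean(scores)
margin = margin_of_error(statistics.stdev(scores), len(scores))
ci_low, ci_high = sample_mean - margin, sample_mean + margin

print(f"95% CI for the mean: {ci_low:.2f} to {ci_high:.2f}")
```

Because the margin shrinks with the square root of the sample size, quadrupling the sample roughly halves the interval width, for example `margin_of_error(2.0, 400)` is half of `margin_of_error(2.0, 100)`.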

Significance Testing

An important part of hypothesis testing is significance testing. This method helps you determine the probability of obtaining results at least as extreme as the ones observed in your sample, assuming the null hypothesis is true. This probability is called the p-value. By comparing the p-value to the significance level (often set at 0.05), you can assess whether the results are statistically significant (the p-value is below the significance level) or could plausibly have arisen by random chance.

An understanding of significance testing allows you to make informed decisions about the data and draw meaningful conclusions about the population based on your sample. By applying statistical tests and interpreting the results correctly, you can confidently communicate your findings and make data-driven decisions in various fields of study.
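To illustrate, the sketch below converts a test statistic into a two-sided p-value and compares it with a significance level of 0.05. It assumes the test statistic follows a standard normal distribution under the null hypothesis (a z-test), which is only one of several possible settings; the two z values are made up for the example.

```python
from statistics import NormalDist

def two_sided_p_value(z_statistic):
    """P(|Z| >= |z|) for a standard normal Z, i.e. a result at least this extreme."""
    return 2 * (1 - NormalDist().cdf(abs(z_statistic)))

alpha = 0.05  # significance level

# Hypothetical test statistics, invented for illustration.
p_weak = two_sided_p_value(1.2)
p_strong = two_sided_p_value(2.5)

for label, p in [("z = 1.2", p_weak), ("z = 2.5", p_strong)]:
    verdict = "significant" if p < alpha else "not significant"
    print(f"{label}: p = {p:.4f} ({verdict} at alpha = {alpha})")
```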

Working with Data Types

To demystify data analysis, it is crucial to understand how to work with different data types. Whether you are dealing with numerical data, categorical data, or text data, each type requires a unique approach to analysis. By mastering the nuances of each data type, you can uncover valuable insights that drive informed decision-making.

Numerical Data

For numerical data, which consists of quantitative values that can be measured and compared mathematically, it is crucial to understand the distribution and summary statistics such as mean, median, and standard deviation. By visualizing numerical data through histograms or box plots, you can gain a deeper understanding of the data’s central tendencies and variability. Additionally, identifying outliers can help you detect errors or anomalies in the data.

Categorical Data

Many datasets contain categorical data, which represents qualitative variables that are typically non-numeric, such as product type or country. When working with categorical data, it is crucial to encode the variables appropriately to ensure accurate analysis. Frequency tables and bar charts can be used to visualize categorical data and uncover patterns or relationships within the data.

A key consideration when working with categorical data is the distinction between nominal and ordinal variables. Nominal variables have no inherent order, while ordinal variables have a defined order or ranking. Understanding this difference is crucial for interpreting and analyzing categorical data effectively.
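The difference between nominal and ordinal variables shows up directly in how you might encode them. In this sketch, a nominal variable gets one indicator column per category (one-hot encoding), while an ordinal variable is mapped to ranks that preserve its order. The survey values and the rank mapping are hypothetical.

```python
from collections import Counter

# Hypothetical survey answers, invented for illustration.
colors = ["red", "blue", "red", "green", "blue", "red"]           # nominal: no order
sizes = ["small", "large", "medium", "small", "large", "medium"]  # ordinal: ordered

# Frequency table for the nominal variable.
frequency_table = Counter(colors)

# Nominal: one-hot encoding, one 0/1 column per category.
categories = sorted(set(colors))  # ['blue', 'green', 'red']
one_hot = [[1 if value == cat else 0 for cat in categories] for value in colors]

# Ordinal: integer ranks that keep the natural order small < medium < large.
size_rank = {"small": 0, "medium": 1, "large": 2}
encoded_sizes = [size_rank[value] for value in sizes]

print(frequency_table.most_common())
print(one_hot[0], encoded_sizes)
```

Assigning ranks to a nominal variable would suggest an order that does not exist (for example, that "red" is somehow greater than "blue"), which is why the two types are handled differently.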

Text Data

One challenge in data analysis is dealing with unstructured text data, which requires specialized techniques for processing and extracting insights. When working with text data, natural language processing (NLP) tools and techniques can help you tokenize the text, remove stop words, and perform sentiment analysis. By leveraging NLP libraries such as NLTK or spaCy, you can uncover valuable information from text data, such as word frequencies or topic modeling.

To effectively analyze text data, it is crucial to consider the context and semantics of the text. Understanding the relationships between words and phrases can help you extract meaningful insights and trends from unstructured text data. By incorporating text analysis techniques into your data analysis workflow, you can unlock the potential of textual data for various applications, such as customer feedback analysis or social media sentiment analysis.
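The basic pipeline of tokenizing, removing stop words, and counting word frequencies can be sketched with the standard library alone. This is a simplified stand-in for what libraries such as NLTK or spaCy do far more thoroughly; the stop-word list is a tiny hand-picked sample and the feedback sentence is invented.

```python
import re
from collections import Counter

# A tiny hand-picked stop-word list for illustration; real NLP libraries ship fuller ones.
STOP_WORDS = {"the", "a", "is", "and", "was", "but", "it", "to", "of"}

def word_frequencies(text):
    """Lowercase the text, split it into word tokens, and count the non-stop words."""
    tokens = re.findall(r"[a-z']+", text.lower())
    return Counter(token for token in tokens if token not in STOP_WORDS)

# Hypothetical customer feedback, invented for illustration.
feedback = "The app is fast, but the app crashes and the support team was slow to reply."

frequencies = word_frequencies(feedback)
print(frequencies.most_common(3))
```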

Remember that by mastering the nuances of numerical, categorical, and text data analysis, you can enhance your data analysis skills and make more informed decisions based on the insights derived from diverse data types.

Data Visualization Best Practices

Choosing the Right Chart

Not all charts are created equal when it comes to visualizing data. The best way to represent your data depends on the type of information you want to convey. Bar charts are great for comparing categories, line charts work well for showing trends over time, and pie charts can effectively display proportions. Make sure to choose a chart that best represents your data accurately and clearly to avoid confusion.

When selecting a chart, consider the audience you are presenting to and the message you want to convey. Avoid cluttering your visualization with unnecessary elements that can distract from the main point. Remember, the best data visualizations are simple, clear, and easy to interpret at a glance.

Experiment with different chart types to see which one best conveys your message. Don’t be afraid to try out new styles and formats to see what works best for your data analysis needs.

Avoiding Misleading Visualizations

The best data visualizations are truthful and accurately represent the data they are based on. Avoid misleading visualizations that manipulate the data or scales to convey a false narrative. Data can be easily distorted through misleading visualizations, leading to incorrect conclusions and decisions based on false information.

It is imperative to critically evaluate your visualizations to ensure they are not inadvertently misleading. Pay attention to the scale, axes, and labeling to prevent any misinterpretation of the data. Visualization should aim to provide a clear and honest representation of the data to facilitate understanding and analysis.
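A little arithmetic shows how much a truncated axis can exaggerate a difference. The sketch below compares how tall two bars appear relative to each other when the value axis starts at zero versus when it starts just below the data. The sales figures and the `bar_height_ratio` helper are hypothetical.

```python
def bar_height_ratio(value_a, value_b, axis_start=0.0):
    """Ratio of the drawn bar heights when the value axis starts at axis_start."""
    return (value_b - axis_start) / (value_a - axis_start)

# Hypothetical quarterly sales, invented for illustration.
sales_q1, sales_q2 = 98.0, 100.0

honest_ratio = bar_height_ratio(sales_q1, sales_q2)                        # axis starts at 0
truncated_ratio = bar_height_ratio(sales_q1, sales_q2, axis_start=96.0)    # axis starts at 96

print(f"axis from 0:  Q2 bar is {honest_ratio:.2f}x the Q1 bar")
print(f"axis from 96: Q2 bar is {truncated_ratio:.2f}x the Q1 bar")
```

A 2% increase looks like a doubling once the axis starts at 96, even though the underlying numbers have not changed.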

By being mindful of the potential for misleading visualizations, you can maintain the integrity of your data analysis and effectively communicate your findings. Remember, transparency and accuracy are key elements of good data visualization practices.

Effective Use of Color

Choosing the right colors for your data visualization is crucial for conveying information effectively. Choosing a color scheme that is visually appealing and easy to interpret can enhance the impact of your visualization. Use strong contrasting colors to distinguish between different data categories and highlight important points.

Consider color blindness when selecting colors for your visualization to ensure accessibility for all viewers. Effective use of color can help draw attention to key insights and trends within the data, making your visualization more engaging and informative.

Experiment with different color combinations and palettes to find the best option for your data visualization. Remember, the best use of color should enhance the clarity and understanding of your data, not detract from it.

Common Data Analysis Mistakes

Confirmation Bias

For beginners in data analysis, confirmation bias is a significant pitfall to avoid. This bias occurs when you unconsciously favor information that confirms your preconceptions or hypotheses while ignoring contradictory data. As a beginner, you may unintentionally seek out evidence that supports your initial assumptions, leading to skewed results and inaccurate conclusions. To overcome confirmation bias, make a conscious effort to challenge your own beliefs and actively seek out contradictory evidence.

An important strategy to combat confirmation bias is to involve others in your data analysis process. By collaborating with peers or seeking feedback from mentors, you can gain valuable perspectives that may uncover biases or assumptions you overlooked. Additionally, it’s crucial to document your analysis steps thoroughly and be transparent about your methods to encourage accountability and prevent confirmation bias from influencing your findings.

Bear in mind, confirmation bias can hinder your ability to make objective decisions based on data. By remaining open-minded, questioning assumptions, and actively seeking out opposing viewpoints, you can minimize this bias and enhance the accuracy and reliability of your data analysis.

Overfitting

On a related note, overfitting is another common mistake that beginners in data analysis should be wary of. This phenomenon occurs when a model fits the training data too closely, capturing noise or random fluctuations that do not reflect the true underlying patterns. While an overfitted model may perform well on the training data, it is likely to generalize poorly to new, unseen data.

This can lead to misleading conclusions and ineffective predictive models, undermining the validity of your analysis. To avoid overfitting, consider simplifying your models, using cross-validation techniques, or incorporating regularization methods that keep models from becoming too complex.
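The gap between training and test performance can be demonstrated with a deliberately small example. It uses a simple held-out test set rather than full cross-validation, and compares a straight-line fit with a "memorizer" (a 1-nearest-neighbour model that returns the value of the closest training point). The data is hypothetical: a true relationship of y = 2x plus hand-picked alternating noise, so the result is deterministic.

```python
def fit_line(xs, ys):
    """Ordinary least squares fit of y = intercept + slope * x."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    slope = (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
             / sum((x - mean_x) ** 2 for x in xs))
    intercept = mean_y - slope * mean_x
    return lambda x: intercept + slope * x

def fit_memorizer(xs, ys):
    """1-nearest-neighbour model: returns the y of the closest training x."""
    pairs = list(zip(xs, ys))
    return lambda x: min(pairs, key=lambda pair: abs(pair[0] - x))[1]

def mse(model, xs, ys):
    """Mean squared error of the model's predictions."""
    return sum((model(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

# Hypothetical data, invented for illustration: y = 2x plus alternating noise of 1.5.
train_x = [float(i) for i in range(8)]
train_y = [2 * x + (1.5 if i % 2 == 0 else -1.5) for i, x in enumerate(train_x)]
test_x = [i + 0.4 for i in range(7)]
test_y = [2 * x + (-1.5 if i % 2 == 0 else 1.5) for i, x in enumerate(test_x)]

models = {"line": fit_line(train_x, train_y),
          "memorizer": fit_memorizer(train_x, train_y)}
results = {name: (mse(m, train_x, train_y), mse(m, test_x, test_y))
           for name, m in models.items()}

for name, (train_error, test_error) in results.items():
    print(f"{name:10s} train MSE = {train_error:.2f}, test MSE = {test_error:.2f}")
```

The memorizer has zero error on the data it was trained on because it reproduces the noise exactly, but its error on the held-out points is several times larger than the simple line's. A large gap between training and test error is the usual warning sign of overfitting.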

Ignoring Outliers

Understanding the impact of outliers is crucial in data analysis. An outlier is a data point that significantly differs from the rest of the dataset, potentially skewing statistical analysis and leading to misleading results. Beginners often overlook outliers or exclude them from their analysis without considering their potential influence on the overall findings.

It’s important to note that ignoring outliers can distort your analysis and conclusions, as these data points may carry valuable information or indicate underlying patterns in the dataset. Instead of disregarding outliers, consider exploring the reasons behind their unusual values and evaluating whether they should be retained, transformed, or treated separately in your analysis.

By acknowledging the presence of outliers and understanding their implications, you can improve the robustness and accuracy of your data analysis, leading to more reliable insights and informed decisions based on the complete dataset.
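One common way to flag candidate outliers for review is the interquartile range (IQR) rule, which marks values more than 1.5 IQRs below the first quartile or above the third quartile. This is a convention rather than a definitive test, and the delivery times below are hypothetical. Note that quartile values can differ slightly between tools depending on the interpolation method; this sketch uses the default of Python's `statistics.quantiles`.

```python
import statistics

# Hypothetical delivery times in minutes, invented for illustration.
delivery_times = [10, 11, 12, 12, 13, 13, 14, 14, 15, 16, 48]

q1, q2, q3 = statistics.quantiles(delivery_times, n=4)  # quartile cut points
iqr = q3 - q1
lower_fence = q1 - 1.5 * iqr
upper_fence = q3 + 1.5 * iqr

# Flag values outside the fences for investigation; do not delete them automatically.
outliers = [t for t in delivery_times if t < lower_fence or t > upper_fence]

print(f"fences: {lower_fence} to {upper_fence}, flagged: {outliers}")
```

Flagging is only the first step. Whether 48 minutes is a data entry error or a genuinely late delivery is a question the numbers alone cannot answer.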

Working with Big Data

Despite the increasing popularity of big data analysis, working with large datasets can be challenging. As a beginner, it is essential to understand key concepts related to handling big data to ensure efficient analysis. In this section, we will explore important considerations for working with big data, including scalability, distributed computing, and cloud-based solutions.

Scalability and Performance

One of the crucial aspects of big data analysis is ensuring scalability and performance. Scalability refers to the system’s ability to handle increasing amounts of data, users, and operations. Performance, on the other hand, focuses on the speed and efficiency of data processing. When working with big data, it is imperative to choose tools and technologies that can scale horizontally to accommodate growing datasets while maintaining high performance levels.

Efficient data processing is key to achieving scalability and performance in big data analysis. Techniques such as parallel processing, distributed computing, and data partitioning can help optimize data processing tasks. By breaking down large datasets into smaller chunks and processing them simultaneously, you can speed up analysis and reduce processing time.
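The partition-process-combine pattern described above can be sketched on a single machine. Here a thread pool stands in for the worker nodes of a real cluster; this is only an illustration of the pattern, since threads in standard Python generally do not speed up CPU-bound pure-Python work, and real frameworks spread the chunks across processes or machines. The `partition` and `chunk_sum_of_squares` helpers are invented for the example.

```python
from concurrent.futures import ThreadPoolExecutor

def partition(data, chunk_size):
    """Split the data into consecutive chunks of at most chunk_size items."""
    return [data[i:i + chunk_size] for i in range(0, len(data), chunk_size)]

def chunk_sum_of_squares(chunk):
    """Work done independently on each chunk."""
    return sum(x * x for x in chunk)

data = list(range(1, 1001))
chunks = partition(data, chunk_size=250)

# Each chunk is handed to a worker; the partial results are combined at the end.
with ThreadPoolExecutor(max_workers=4) as pool:
    partial_sums = list(pool.map(chunk_sum_of_squares, chunks))

total = sum(partial_sums)
print(f"{len(chunks)} chunks, total = {total}")
```

The pattern works because the sum of squares can be computed per chunk and then added up. Calculations that need to see all the data at once, such as an exact median, are harder to split this way.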

Improving scalability and performance often involves implementing proper data management strategies. This includes optimizing data storage, streamlining data pipelines, and utilizing caching mechanisms. By addressing these aspects, you can enhance the overall efficiency of your big data analysis processes.

Distributed Computing

One of the fundamental principles of handling big data is distributed computing. Distributed computing involves processing data across multiple interconnected nodes or machines. This approach allows for parallel processing and data storage across a cluster of machines, enabling efficient handling of large datasets.

Implementing distributed computing frameworks such as Apache Hadoop or Spark can significantly improve your big data analysis capabilities. These frameworks provide tools for distributed data processing, allowing you to leverage the power of multiple machines to process data in parallel. By distributing computational tasks, you can overcome the limitations of processing large datasets on a single machine.

It is imperative to understand the fundamentals of distributed computing to effectively harness the power of big data. By distributing data processing tasks across multiple nodes, you can achieve higher performance levels and improved scalability in your data analysis workflows.

Cloud-Based Solutions

Data analysis in the cloud offers flexibility and scalability advantages for handling big data. Cloud-based solutions provide on-demand access to computing resources, enabling you to scale your data analysis processes based on workload requirements. Additionally, cloud platforms offer various data storage options and services, allowing you to efficiently manage and analyze large datasets.

Working with big data in the cloud can also help reduce operational costs and improve accessibility to data analysis tools. Cloud services eliminate the need for maintaining on-premises infrastructure, allowing you to focus on data analysis tasks rather than infrastructure management. Moreover, cloud platforms often offer integrations with popular data analysis tools and frameworks, simplifying the deployment of big data solutions.

By leveraging cloud-based solutions for big data analysis, you can enhance scalability, optimize performance, and streamline data management processes. Cloud platforms provide a flexible and cost-effective environment for conducting data analysis tasks, making it easier for beginners to explore the world of big data.

Ethics in Data Analysis

Privacy and Confidentiality

Data analysis often involves handling large amounts of personal information. The privacy and confidentiality of individuals’ data should always be a top priority when conducting data analysis. It is crucial to ensure that the data being used is anonymized and encrypted to protect individuals’ identities and sensitive information.

The importance of privacy and confidentiality in data analysis cannot be overstated. Unauthorized access to personal data can lead to serious consequences, including identity theft, financial fraud, and invasion of privacy. As a beginner in data analysis, it is important to familiarize yourself with privacy laws and best practices to uphold the ethical standards of the field.

Always remember that behind every data point is a real person with rights to privacy and protection. Respecting and safeguarding individuals’ privacy and confidentiality is not just a legal requirement but a moral imperative that should guide your data analysis practices.
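One small, practical step in this direction is replacing direct identifiers with keyed hashes before analysis. The sketch below uses Python's standard `hmac` and `hashlib` modules. Strictly speaking this is pseudonymization rather than full anonymization, because the remaining fields may still allow someone to be re-identified. The records, the field names, and the key are all hypothetical; a real key should be randomly generated and stored separately from the data.

```python
import hashlib
import hmac

def pseudonymize(identifier, secret_key):
    """Replace an identifier with a keyed SHA-256 digest (HMAC)."""
    return hmac.new(secret_key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()

# Hypothetical key and records, invented for illustration.
SECRET_KEY = b"replace-with-a-randomly-generated-key"
records = [
    {"email": "alice@example.com", "purchase": 42.50},
    {"email": "bob@example.com", "purchase": 17.00},
    {"email": "alice@example.com", "purchase": 8.25},
]

# Drop the email and keep only a stable pseudonym, so purchases can still be grouped.
safe_records = [
    {"user_id": pseudonymize(r["email"], SECRET_KEY), "purchase": r["purchase"]}
    for r in records
]

for record in safe_records:
    print(record["user_id"][:12], record["purchase"])
```

The same email always maps to the same pseudonym, so you can still analyze purchases per customer without the analysis dataset containing the address itself.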

Bias in Data Collection

The issue of bias in data collection is a critical ethical consideration in data analysis. Data collection methods that are biased or discriminatory can lead to skewed results and perpetuate inequalities in society. It is important to be aware of potential biases in the data you are analyzing and take steps to mitigate them.

Data collection bias can occur at various stages, such as sampling, survey design, and data interpretation. Being mindful of your own biases and actively working to minimize them can help ensure that your data analysis is fair and unbiased.

Remember that biased data not only compromises the credibility of your analysis but also has real-world implications. Addressing bias in data collection is crucial for producing accurate and ethical insights that can inform decision-making and drive positive change.

Data analysis can help uncover valuable insights and patterns, but it is not immune to ethical considerations. It is important to approach data analysis with a critical eye and a commitment to transparency and accountability. By upholding ethical standards in your data analysis practices, you can contribute to building trust in the field and ensuring the responsible use of data for the benefit of society.

Transparency and Accountability

Privacy and security are paramount concerns in data analysis, but transparency and accountability are equally important. Being transparent about the sources of your data, the methods used in your analysis, and the limitations of your findings is important for ensuring the integrity and reliability of your work.

Transparent data analysis practices not only build trust with stakeholders but also allow for greater scrutiny and validation of your results. Accountability involves taking ownership of your analysis and being prepared to address any ethical concerns or discrepancies that may arise during the process.

Embracing transparency and accountability in your data analysis not only demonstrates your commitment to ethical standards but also fosters a culture of openness and collaboration in the data analysis community. By holding yourself accountable for the ethical implications of your work, you can help promote ethical practices and responsible data use in the field.

Communicating Insights

Once again, if you want to deepen your understanding of data analysis and enhance your skills, be sure to check out the article “Demystifying Data Analytics: A Step-by-Step Guide for Beginners”. Communication is a crucial aspect of data analysis. It’s not just about crunching numbers; it’s about interpreting and communicating the insights you uncover. This is where the art of storytelling with data comes into play.

Storytelling with Data

With data analysis, storytelling is more than just presenting numbers and figures; it’s about crafting a narrative that engages your audience and communicates complex insights in a digestible and memorable way. By combining data with compelling visuals and a coherent storyline, you can create a powerful narrative that resonates with your audience. Remember, data is only as valuable as the story it tells.

Creating Effective Reports

An effective report is more than just a collection of charts and graphs; it’s a structured and cohesive document that guides the reader through your analysis and insights. When creating reports, consider your audience’s level of technical expertise and tailor your language and visuals accordingly. A well-crafted report should have a clear introduction, a logical flow of information, and visually appealing elements to support your findings.

The key to creating effective reports lies in clarity and conciseness. Avoid overwhelming your audience with excessive data and instead focus on highlighting the most important insights. Use labels and captions to provide context to your visuals, and include a summary or key takeaways section to reinforce your main points. Remember, your goal is to make your findings accessible and actionable for your audience.

Presenting Findings to Non-Technical Audiences

Presenting to a non-technical audience requires a different approach. Simplicity and clarity are paramount. Focus on translating technical jargon into layman’s terms and use analogies or real-life examples to illustrate your points. Emphasize the impact of your findings and why they matter. Engage your audience by encouraging questions and fostering a dialogue around the data. Remember, the goal is to empower your audience to make informed decisions based on your insights.

A successful presentation to a non-technical audience requires not only a deep understanding of the data but also empathy and effective communication skills. Put yourself in your audience’s shoes and anticipate their questions and concerns. Tailor your presentation to address their needs and interests while maintaining the integrity and accuracy of your analysis. By engaging and educating your audience, you can inspire them to act on the insights you provide.

Advanced Data Analysis Techniques

Many beginners in data analysis start with the basics but soon realize there are more advanced techniques that can provide deeper insights. If you want to take your data analysis skills to the next level, consider exploring advanced methods. Remember, the journey from data to insights can be challenging but highly rewarding. For a more detailed guide on this topic, check out our blog post on Demystifying Data to Insights: A Practical Guide.

Machine Learning

One popular advanced data analysis technique is Machine Learning. This method involves using algorithms to analyze and interpret data, allowing systems to learn and improve from experience without being explicitly programmed. Machine Learning can be used for various tasks such as image recognition, language translation, and even predicting future trends based on historical data patterns.
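To make "learning from historical data" concrete, here is a minimal sketch using only Python's standard library. It fits a straight line to past observations by ordinary least squares and extends it one step ahead. The function names (`fit_line`, `predict`) and the sales figures are hypothetical, made up purely for illustration; real projects would typically use a dedicated library and far more data.

```python
from statistics import mean


def fit_line(xs, ys):
    """Fit y = slope * x + intercept by ordinary least squares."""
    x_bar, y_bar = mean(xs), mean(ys)
    # Spread of x around its mean, and how x and y move together.
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return slope, intercept


def predict(model, x):
    """Apply a fitted (slope, intercept) pair to a new x value."""
    slope, intercept = model
    return slope * x + intercept


# Hypothetical history: month number vs. units sold (made-up figures).
months = [1, 2, 3, 4, 5, 6]
units_sold = [12, 14, 16, 18, 20, 22]

model = fit_line(months, units_sold)   # the "learning" step
forecast = predict(model, 7)           # the "prediction" step
print(f"Forecast for month 7: {forecast:.1f} units")
```

The rule "add two units per month" is never written into the code; the slope is estimated from the data, which is the sense in which the system learns from experience.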

Predictive Modeling

Advanced Predictive Modeling Techniques

Predictive Modeling goes beyond descriptive analytics to forecast future outcomes. Using advanced statistical algorithms, you can predict trends, behavior, and events based on historical data. This technique is important for making informed decisions and strategic planning in various fields such as marketing, finance, and healthcare.

One way to enhance your predictive modeling skills is by exploring ensemble methods, which combine multiple models to improve prediction accuracy. By understanding and implementing advanced predictive modeling techniques, you can unlock valuable insights and make data-driven decisions that drive success in your projects.
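As a rough illustration of the ensemble idea, the sketch below averages the forecasts of three deliberately simple models. This is plain prediction averaging, not a full method such as bagging or boosting, and averaging does not guarantee a better forecast on every dataset. All function names and the sample history are hypothetical.

```python
def last_value_model(history):
    """Predict that the next value equals the most recent one."""
    return history[-1]


def mean_model(history):
    """Predict the average of everything seen so far."""
    return sum(history) / len(history)


def trend_model(history):
    """Extend the average step between consecutive observations."""
    steps = [b - a for a, b in zip(history, history[1:])]
    return history[-1] + sum(steps) / len(steps)


def ensemble_forecast(history, models):
    """Combine the models by averaging their individual predictions."""
    predictions = [model(history) for model in models]
    return sum(predictions) / len(predictions)


# Hypothetical weekly measurements (made-up figures).
history = [10, 12, 11, 13, 15]
models = [last_value_model, mean_model, trend_model]

for model in models:
    print(f"{model.__name__}: {model(history):.2f}")
print(f"ensemble: {ensemble_forecast(history, models):.2f}")
```

Each model errs in a different way (one ignores the trend, another reacts only to the latest point), so the average tends to be less extreme than any single forecast.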

Data Mining

Modeling Patterns with Data Mining

Data Mining is the process of discovering patterns and relationships in large datasets. It involves using various statistical techniques and machine learning algorithms to extract valuable information from raw data. Data Mining can help you identify hidden patterns, outliers, and trends that can lead to valuable insights for your business or research.
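One small example of pattern discovery is counting which items tend to appear together. The sketch below, using only Python's standard library, counts item pairs across a handful of shopping baskets and keeps those seen at least `min_support` times. The function name `frequent_pairs`, the threshold, and the baskets are hypothetical; production data mining tools use more scalable algorithms.

```python
from collections import Counter
from itertools import combinations


def frequent_pairs(transactions, min_support):
    """Return item pairs that occur together in at least min_support baskets."""
    counts = Counter()
    for basket in transactions:
        # Sort and de-duplicate so each pair is counted once per basket
        # and always appears in the same order.
        for pair in combinations(sorted(set(basket)), 2):
            counts[pair] += 1
    return {pair: n for pair, n in counts.items() if n >= min_support}


# Hypothetical shopping baskets (made-up data).
transactions = [
    ["bread", "milk"],
    ["bread", "butter", "milk"],
    ["butter", "jam"],
    ["bread", "butter", "milk"],
]

pairs = frequent_pairs(transactions, min_support=2)
for pair, n in sorted(pairs.items(), key=lambda item: -item[1]):
    print(pair, n)
```

Here bread and milk turn up together in three of the four baskets, a relationship that is easy to miss when scanning raw rows but obvious once counted.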

Predictive modeling is closely tied to data mining, since it uses the patterns found in historical data to predict future outcomes. By combining the two, you can uncover important trends and patterns that drive business growth and innovation.

Staying Up-to-Date

Industry Trends and Developments

After learning the basics of data analysis, it’s important to stay up-to-date with industry trends and developments. An understanding of the current landscape can help you identify opportunities and potential areas for growth in your career. Follow reputable sources such as industry publications, online forums, and social media to stay informed about the latest advancements in data analysis.

Continuing Education and Training

For ongoing growth and development in data analysis, consider enrolling in specialized courses or pursuing certifications. Continuing education can not only enhance your skills but also broaden your knowledge base. Look for online courses, workshops, or seminars that focus on advanced techniques and tools used in the field.

For a more comprehensive education, you might also consider pursuing a graduate degree in data science or a related field. These programs can provide you with in-depth knowledge and hands-on experience that can set you apart in the competitive job market.

Staying Current with New Tools and Technologies

Data analysis is constantly evolving, with new tools and technologies being introduced regularly. It’s crucial to stay current with these advancements to remain competitive in the field. One way to stay updated is by attending industry conferences and networking events where you can learn about the latest tools and technologies firsthand.

Conclusion

Data analysis may seem intimidating at first, but with the right approach and mindset, you can master this valuable skill. By breaking down the process into manageable steps, practicing regularly, and seeking guidance when needed, you will gradually build confidence and proficiency in analyzing data effectively. Remember that the key is not to get overwhelmed by the vast amount of information but to focus on understanding the data and drawing meaningful insights from it.

As a beginner in data analysis, it is important to familiarize yourself with various tools and techniques available to aid you in your analysis. Whether it’s learning how to use software like Excel or Python, understanding different types of data visualizations, or exploring statistical concepts, continuous learning and experimentation will help deepen your understanding and improve your analytical skills. Don’t be afraid to make mistakes along the way; they are all part of the learning process and can provide valuable insights for future analyses.

Summing up, by following the tips and strategies outlined in this article, you are well on your way to demystifying data analysis and becoming a proficient data analyst. Remember that practice, patience, and persistence are key to mastering any new skill, including data analysis. Embrace the challenges, stay curious, and keep refining your skills, and you will soon find yourself confidently navigating the world of data and unlocking valuable insights that can drive informed decision-making and propel your career forward.