Many organizations are navigating the ever-evolving landscape of data engineering and analytics, seeking innovative ways to harness the power of their data. By embracing cloud technologies, you can enhance your data capabilities, streamline processes, and gain valuable insights. In this post, we will explore the transformative potential of cloud computing in data engineering and analytics, equipping you with the tools and knowledge to take your data strategy further. Prepare to embark on a journey where many of the constraints of traditional, on-premises computing fall away, allowing your analytical work to flourish.

Cloud Technologies Overview

Defining Cloud Computing

The essence of cloud computing lies in its revolutionary ability to provide on-demand access to a shared pool of configurable computing resources—be it networks, servers, storage, applications, or services. This evolution not only allows you to consume computing resources remotely but also enables you to scale them up or down based on your needs. Imagine no longer being constrained by the physical limitations of your hardware; instead, you can leverage the vast and flexible infrastructure offered by cloud services. With this paradigm shift, the notion of how and where you store and process your data has transformed irrevocably.

On a technical level, cloud computing is fundamentally about virtualization and resource pooling. It gives end users, and more importantly businesses, the flexibility and efficiency they have long sought. Rather than investing heavily in physical equipment that requires constant maintenance and upgrades, you access resources over the internet and rent them as needed. This reduces capital expenditure and frees budget for innovation and growth, helping you align your IT strategy with your business objectives.

Your engagement with cloud technologies opens up many possibilities. The cloud is a platform for collaboration, speed, and accessibility that traditional on-premises environments struggle to match. Whether you are using infrastructure as a service (IaaS), platform as a service (PaaS), or software as a service (SaaS), you gain flexibility, reliability, and efficiency. Because the provider handles much of the underlying complexity, you can adopt the latest technologies without long procurement cycles.

Cloud Service Models: IaaS, PaaS, SaaS

Any discussion of cloud technologies must address the three primary service models that dictate how you can leverage these resources: Infrastructure as a Service (IaaS), Platform as a Service (PaaS), and Software as a Service (SaaS). Each model offers distinct functionalities and advantages. For example, IaaS provides critical foundational infrastructure and is often used for hosting applications and websites; it mimics on-premises systems in a cloud environment. Conversely, PaaS allows you to build applications without the complexity of maintaining hardware and software layers, offering tools that streamline development while taking away many of the headaches associated with infrastructure management. Finally, SaaS delivers software solutions over the cloud, allowing you to access applications seamlessly without installation processes.

Every organization can benefit from understanding these models in depth, because they shape how data engineering and analytics can be implemented in the cloud. Choosing between IaaS, PaaS, and SaaS depends on your specific requirements, your current architectural maturity, and your long-term goals. Chosen well, these service models align your resources with your business trajectory and let you adopt the technology that yields the most value with the least overhead.

Service expectations vary as you navigate through these models. For IaaS, you retain control over your infrastructure while outsourcing hardware management; PaaS offers a balanced middle-ground where you can focus on development rather than maintenance; and SaaS liberates you entirely from backend complications, allowing you to concentrate on productivity. As you assess your organizational needs, understanding these service models will empower you to harness the full potential of cloud technologies in data engineering and analytics.
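One way to make the division of responsibilities concrete is to encode it as a small lookup table. The sketch below is a simplified, hypothetical model: the layer names and the exact split are assumptions for illustration, and each provider publishes its own, more detailed shared-responsibility model.

```python
# Hypothetical, simplified responsibility matrix: which party manages each
# layer under each service model. Real provider models are more detailed.
LAYERS = ["hardware", "virtualization", "operating_system",
          "runtime", "application", "data"]

# Index of the first layer the customer manages in each model (assumption).
CUSTOMER_STARTS_AT = {"IaaS": 2, "PaaS": 4, "SaaS": 5}

def responsibilities(model: str) -> dict[str, str]:
    """Map each layer to 'provider' or 'customer' for the given model."""
    start = CUSTOMER_STARTS_AT[model]
    return {layer: ("customer" if i >= start else "provider")
            for i, layer in enumerate(LAYERS)}

for model in ("IaaS", "PaaS", "SaaS"):
    owned = [layer for layer, who in responsibilities(model).items()
             if who == "customer"]
    print(f"{model}: customer manages {owned}")
```

Even under SaaS, the customer remains responsible for its own data, which is why data governance matters in every model.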

Data Engineering Fundamentals

Data Ingestion and Processing

Data engineering begins with two vital processes: data ingestion and processing. In the cloud, the ability to efficiently gather structured, semi-structured, and unstructured data from diverse sources is the foundation of any advanced analytics effort. The ingestion mechanisms you choose, whether batch processing, streaming, or a combination, must match the scale and velocity of the data you intend to work with. This initial step determines how data flows into your systems and is critical for maintaining data integrity as you move it through subsequent transformations.

To maximize the effectiveness of data ingestion, use cloud-native tools that offer scalability and reliability, so you can handle fluctuations in data volume without compromising speed or performance. Technologies like Apache Kafka for real-time data streaming and AWS Glue for batch ETL are common building blocks of a robust data pipeline. By adopting such tools, your engineering efforts can adapt to changing data volumes and sources while keeping latency low. Note that the key lies not just in ingesting data but in preparing it for the analytical processes ahead, ensuring that it is clean, relevant, and easily accessible.

Moreover, the processing aspect cannot be overlooked. Once data is ingested, you need to apply transformations that make it more usable. This involves filtering, aggregating, and enriching the data to derive meaningful insights that can inform decision-making. Cloud services such as Google Cloud Dataflow or Azure Synapse Analytics let you build transformation workflows that are both efficient and cost-effective. Positioning your data engineering efforts strategically at this stage paves the way for advanced analytics that can strengthen your organization's strategic capabilities.
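The filter, aggregate, and enrich steps can be sketched in plain Python before moving them onto a managed service. The event fields (`store_id`, `amount`, `status`) and the `store_regions` lookup table are hypothetical; a service such as Dataflow or Synapse would run equivalent logic in parallel over far larger inputs.

```python
from collections import defaultdict

# Hypothetical raw events, as they might arrive from an ingestion step.
events = [
    {"store_id": 1, "amount": 20.0, "status": "ok"},
    {"store_id": 2, "amount": 35.5, "status": "ok"},
    {"store_id": 1, "amount": 12.5, "status": "ok"},
    {"store_id": 2, "amount": 99.0, "status": "refunded"},
]

# Hypothetical reference data used for enrichment.
store_regions = {1: "north", 2: "south"}

def transform(rows, regions):
    # 1. Filter: keep only completed transactions.
    valid = (r for r in rows if r["status"] == "ok")
    # 2. Aggregate: total revenue per store.
    totals = defaultdict(float)
    for r in valid:
        totals[r["store_id"]] += r["amount"]
    # 3. Enrich: attach the region from the reference table.
    return [
        {"store_id": sid, "region": regions.get(sid, "unknown"), "revenue": total}
        for sid, total in sorted(totals.items())
    ]

print(transform(events, store_regions))
```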

Data Storage and Management

To ensure that your data is not only available but also manageable and secure, you must invest in a robust storage and management framework. The cloud provides many options, from data lakes to data warehouses, that serve distinct purposes depending on the nature of your analytics workload. For example, if you are storing vast amounts of raw, unstructured data for long-term analysis, a data lake built on object storage such as Amazon S3 or Google Cloud Storage might be your best bet. Conversely, if your focus is on structured data and you require powerful querying capabilities, a cloud data warehouse such as Snowflake or Google BigQuery is the better fit. The choices you make here will directly influence the performance of your data-driven applications.
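Data lakes on object storage are commonly organized with Hive-style partitioning, where each directory level encodes a `key=value` pair so query engines can skip irrelevant data. The sketch below assumes a hypothetical bucket name and table layout; it only builds and filters path strings and does not call any cloud SDK.

```python
from datetime import date

def partition_path(bucket: str, table: str, event_date: date, region: str) -> str:
    """Build a Hive-style partitioned prefix (key=value path segments)."""
    return f"s3://{bucket}/{table}/dt={event_date.isoformat()}/region={region}/"

def prune(paths: list[str], dt: str) -> list[str]:
    """Simple partition pruning: keep only prefixes whose dt= segment matches."""
    return [p for p in paths if f"/dt={dt}/" in p]

# Hypothetical bucket and table names.
paths = [partition_path("example-lake", "orders", date(2024, 3, d), "eu")
         for d in (1, 2)]
print(prune(paths, "2024-03-02"))
```

A query filtered on `dt = '2024-03-02'` only needs to read objects under the matching prefix, which is where much of a data lake's query efficiency comes from.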

To facilitate effective data management, you should not only consider the technology itself but also the best practices surrounding data governance. This involves setting policies for data quality, security, and compliance to ensure that your data remains accurate, reliable, and accessible to authorized users. Furthermore, cloud technologies are designed with scalability in mind; hence, you can start with a modest dataset and expand as your analytical needs grow. This inherent flexibility allows you to accommodate future data ingestion without necessitating a complete overhaul of your system architecture.
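Data-quality policies are easiest to enforce when they are written as explicit, testable rules. The following sketch expresses a few hypothetical rules (the field names and the 0 to 120 age range are assumptions) as predicates and reports which records violate which rule.

```python
# Hypothetical data-quality rules: rule name -> predicate a record must satisfy.
RULES = {
    "customer_id_present": lambda r: r.get("customer_id") is not None,
    "email_has_at_sign": lambda r: "@" in (r.get("email") or ""),
    "age_in_range": lambda r: r.get("age") is None or 0 <= r["age"] <= 120,
}

def check_quality(records):
    """Return a list of (record_index, failed_rule) pairs."""
    failures = []
    for i, record in enumerate(records):
        for name, rule in RULES.items():
            if not rule(record):
                failures.append((i, name))
    return failures

records = [
    {"customer_id": 1, "email": "a@example.com", "age": 34},
    {"customer_id": None, "email": "b.example.com", "age": 150},
]
print(check_quality(records))
```

In a pipeline, failing records would typically be quarantined or routed for review rather than silently dropped.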

Keep in mind, too, that optimizing data storage and management is not only about availability; it also involves categorizing, labeling, and deriving utility from your data efficiently. Metadata management practices provide context and structure for your datasets, making it easier for data scientists and analysts to locate and use the information they need. The right storage and management strategies not only mitigate risks but also increase the potential for insights, giving your organization a roadmap to informed decision-making and innovation.
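A metadata catalog can be as simple as a registry of dataset descriptions with owners and tags. The sketch below is a minimal, hypothetical in-memory catalog; managed catalog services such as the AWS Glue Data Catalog provide persistent, richer versions of the same idea.

```python
from dataclasses import dataclass, field

@dataclass
class DatasetEntry:
    """Minimal, hypothetical catalog entry describing one dataset."""
    name: str
    owner: str
    description: str
    tags: set[str] = field(default_factory=set)

class Catalog:
    def __init__(self):
        self._entries: dict[str, DatasetEntry] = {}

    def register(self, entry: DatasetEntry) -> None:
        # Re-registering a name replaces the previous entry.
        self._entries[entry.name] = entry

    def search(self, tag: str) -> list[str]:
        """Return the sorted names of datasets carrying the given tag."""
        return sorted(e.name for e in self._entries.values() if tag in e.tags)

catalog = Catalog()
catalog.register(DatasetEntry("orders", "sales-eng", "One row per order", {"sales", "pii"}))
catalog.register(DatasetEntry("web_logs", "platform", "Raw clickstream", {"raw"}))
catalog.register(DatasetEntry("customers", "crm", "Customer master data", {"sales", "pii"}))
print(catalog.search("pii"))
```

Tags such as `pii` also give governance tooling a hook: access policies and retention rules can target tagged datasets rather than individual tables.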

Advanced Data Engineering Techniques

Keep in mind that as you probe deeper into the world of data engineering and analytics, adopting advanced techniques is necessary for harnessing the full potential of cloud technologies. The following list outlines some transformative methodologies that will elevate your practices:

- Distributed Computing and Scalability

- Real-time Data Processing and Streaming

- Data Warehousing Solutions

- Data Governance and Quality Management

- Machine Learning Pipeline Automation

To better understand the core elements of these advanced techniques, consider the following breakdown:

| Technique | Description |
|---|---|
| Distributed Computing | Utilizing multiple computers to solve complex problems more efficiently. |
| Scalability | The capability to increase resources to accommodate growth. |
| Real-time Data Processing | Analyzing and processing data immediately as it is created or received. |
| Streaming | Continuously transmitting data for immediate processing and utilization. |

Distributed Computing and Scalability

Distributed computing is a paradigm in which computing tasks are spread across multiple systems, improving both processing speed and resource utilization. This technique is especially valuable in a cloud environment, where additional resources can be provisioned on demand. You can deploy many virtual machines across multiple nodes to parallelize computing tasks, which lets you handle large datasets while delivering insights faster. As a result, data processing jobs that once seemed unmanageable can now be completed efficiently and with significantly reduced latency.
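The split, process, and merge pattern behind distributed computing can be illustrated on a single machine. In this sketch, threads from `concurrent.futures` stand in for cluster nodes; because of CPython's global interpreter lock they do not speed up CPU-bound work, so treat it as a model of the pattern (the classic MapReduce word count), not as a performance technique. Frameworks such as Apache Spark apply the same idea across many machines.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor

def count_words(chunk: list[str]) -> Counter:
    """'Map' step: count words in one partition of the data."""
    counts = Counter()
    for line in chunk:
        counts.update(line.lower().split())
    return counts

def distributed_word_count(lines: list[str], workers: int = 4) -> Counter:
    # Split the input into roughly equal partitions, one per worker.
    size = max(1, -(-len(lines) // workers))  # ceiling division
    chunks = [lines[i:i + size] for i in range(0, len(lines), size)]
    total = Counter()
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # Run the map step on each partition concurrently, then
        # 'reduce' by merging the partial counts.
        for partial in pool.map(count_words, chunks):
            total.update(partial)
    return total

lines = ["the cloud scales", "the data grows", "scale the cloud"]
print(distributed_word_count(lines, workers=2).most_common(2))
```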

Moreover, scalability is intricately tied to distributed computing, enabling agility as your data needs evolve. You may find that as your business grows, your infrastructure must keep pace with the surging volume of data. By employing cloud technologies, you can effortlessly scale your computing resources up or down, ensuring that you only pay for what you use. This flexibility allows you to manage peak demands without the burdensome investments associated with on-premises setups.
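Pay-for-what-you-use scaling is usually driven by a simple rule: keep enough instances running so that each one stays near a target load. The function below is a simplified sketch of such a target-tracking rule; the target of 200 requests per second per instance and the 1 to 20 instance bounds are assumptions, and production autoscalers add cooldowns and smoothing on top.

```python
import math

def desired_capacity(current_load: float, target_per_instance: float,
                     min_instances: int = 1, max_instances: int = 20) -> int:
    """Target-tracking style rule: enough instances to keep per-instance
    load at or below the target, clamped to [min_instances, max_instances].
    """
    needed = math.ceil(current_load / target_per_instance)
    return max(min_instances, min(max_instances, needed))

# Hypothetical load levels, in requests per second.
for load in (50, 900, 5000):
    print(load, "->", desired_capacity(load, target_per_instance=200))
```

The upper bound matters as much as the scaling rule itself: it caps spending if load spikes unexpectedly.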

The interplay between distributed computing and scalability allows you to focus on your core objectives without being hindered by infrastructural constraints. The seamless integration of these concepts not only transforms the way you approach data engineering but also empowers you to derive insights that can lead to informed decision-making. You will find that embracing these techniques places you in a better position to navigate the complexities inherent in today’s data-driven landscape.

Real-time Data Processing and Streaming

Processing data in real-time introduces a fresh paradigm that gives your analytics a significant edge. In an era where immediate insights are prized, you cannot afford to rely on batch processing alone. By adopting real-time data processing techniques, you can manage data feeds and analyze streams on the fly. This adaptability ensures that you capture trends as they emerge, allowing your business to respond to changes with agility and precision. Whether you’re monitoring system performance, tracking user behavior, or managing supply chains, real-time analytics will amplify your decision-making capabilities.
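Real-time analytics often aggregates events into fixed, non-overlapping time windows (tumbling windows). The generator below sketches this under the simplifying assumption that events arrive in timestamp order; frameworks such as Apache Flink also handle late and out-of-order events, for example with watermarks. The event stream is hypothetical.

```python
def tumbling_windows(events, window_seconds: int):
    """Yield (window_start, counts_per_key) for fixed, non-overlapping windows.

    Assumes events arrive in timestamp order: a window is emitted as soon as
    an event for a later window is seen, and the last window is flushed
    when the stream ends.
    """
    current_start, counts = None, {}
    for ts, key in events:
        start = ts - ts % window_seconds
        if current_start is not None and start != current_start:
            yield current_start, counts
            counts = {}
        current_start = start
        counts[key] = counts.get(key, 0) + 1
    if current_start is not None:
        yield current_start, counts

# Hypothetical stream of (timestamp_seconds, event_type) pairs.
stream = [(0, "login"), (12, "click"), (59, "click"), (61, "login"), (75, "click")]
for window_start, counts in tumbling_windows(stream, window_seconds=60):
    print(window_start, counts)
```

Because it is a generator, each window's result is available as soon as the window closes, rather than after the whole stream has been read.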

Your ability to extract meaningful insights from continuous data streams relies on stream processing frameworks. With tools like Apache Kafka for transporting event streams and Apache Flink for processing them, you can ingest, analyze, and act on data as it arrives, turning raw information into valuable insights almost instantly. Such infrastructure also enables complex event processing, where you detect patterns and anomalies as they happen, effectively turning data into action within seconds.
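One simple way to flag anomalies in a stream is a rolling z-score: compare each new value against the mean and standard deviation of recent values. This is a basic statistical stand-in for the richer pattern matching that complex event processing engines offer; the window size, threshold, and readings below are illustrative assumptions.

```python
from collections import deque
import statistics

class RollingAnomalyDetector:
    """Flag values far from the mean of the last `window` observations."""

    def __init__(self, window: int = 20, threshold: float = 3.0):
        self.values = deque(maxlen=window)  # oldest values drop off automatically
        self.threshold = threshold

    def observe(self, value: float) -> bool:
        is_anomaly = False
        if len(self.values) >= 2:
            mean = statistics.fmean(self.values)
            stdev = statistics.pstdev(self.values)
            # Skip the check when recent values are all identical (stdev == 0).
            if stdev > 0 and abs(value - mean) / stdev > self.threshold:
                is_anomaly = True
        self.values.append(value)
        return is_anomaly

detector = RollingAnomalyDetector(window=5, threshold=3.0)
readings = [100, 100, 101, 99, 100, 250, 100]  # hypothetical sensor readings
print([r for r in readings if detector.observe(r)])
```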

Streaming is a vital aspect of modern data engineering that facilitates innovation. It allows you to keep abreast of ever-evolving market dynamics while enabling a quicker time-to-insight. The continuous flow of data not only enriches your analytics but also fosters a culture of responsiveness within your organization. Engaging with these advanced data processing techniques positions you at the forefront of data-driven decision-making and ensures that your analytics remain relevant and impactful.

Cloud-based Data Analytics

With the dawn of a digital age replete with vast amounts of data, the cloud emerged as a formidable solution for managing the complex dynamics of data analytics. Through the expansive capabilities of cloud-based resources, organizations like yours can access powerful tools for data processing, storage, and analysis without the burdens of traditional on-premises infrastructure. This democratization of technology lets you harness advanced analytics capabilities that support decision-making and improve operational efficiency. As you investigate the wide range of cloud services available, it becomes necessary to understand how each can be leveraged for data analytics.

Data Warehousing and Business Intelligence

For organizations keen on transforming raw data into actionable insights, a robust data warehousing solution is paramount. Cloud-based data warehousing platforms offer you the ability to consolidate disparate data sources into a single, cohesive repository while maintaining scalability and flexibility. With advanced functionalities such as automated data ingestion, governed access, and user-friendly interfaces for analysis, cloud data warehousing allows you to focus on deriving insights rather than grappling with complex infrastructure management. As you migrate towards a cloud-centric strategy, aligning your data warehouse with your business intelligence tools will create a seamless conduit for your data journeys.
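A cloud warehouse typically consolidates sources into fact and dimension tables (a star schema) that BI tools then query. The sketch uses Python's built-in `sqlite3` in memory as a stand-in for a cloud warehouse such as BigQuery, Snowflake, or Redshift; the table names, columns, and rows are hypothetical, but the SQL pattern carries over.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    -- Dimension table: one row per product.
    CREATE TABLE dim_product (product_id INTEGER PRIMARY KEY, category TEXT);
    -- Fact table: one row per sale, referencing the dimension.
    CREATE TABLE fact_sales (
        sale_id INTEGER PRIMARY KEY,
        product_id INTEGER REFERENCES dim_product(product_id),
        source TEXT,  -- which upstream system the row came from
        amount REAL
    );
    INSERT INTO dim_product VALUES (1, 'books'), (2, 'games');
    INSERT INTO fact_sales (product_id, source, amount) VALUES
        (1, 'web_store', 15.0), (2, 'web_store', 60.0), (1, 'pos', 25.0);
""")

# A typical BI query: revenue by category across all consolidated sources.
rows = conn.execute("""
    SELECT p.category, SUM(f.amount) AS revenue
    FROM fact_sales AS f
    JOIN dim_product AS p USING (product_id)
    GROUP BY p.category
    ORDER BY revenue DESC
""").fetchall()
print(rows)
```

The `source` column records lineage, so analysts can still break results down by upstream system after consolidation.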

Moreover, cloud-based business intelligence solutions empower you to visualize your data in real-time, fostering a culture of informed decision-making throughout your organization. By integrating tools that support natural language processing and drag-and-drop analytics, you can democratize data access, enabling even non-technical stakeholders to engage with the data meaningfully. As you evolve and adapt to changing market conditions, the adaptability of cloud BI platforms ensures that your analytical capabilities can scale in tandem with your business growth while providing you with the agility required to respond swiftly to new opportunities and challenges.

Furthermore, these cloud solutions are designed with collaborative work environments in mind. As teams become increasingly dispersed and remote, the ability to share insights efficiently becomes essential. Cloud data warehouses and BI tools offer real-time collaboration features, allowing your teams to work together seamlessly, regardless of their location. This fosters an environment where insights can be rapidly shared and acted upon, enhancing overall productivity and innovation within your organization.

Machine Learning and Predictive Analytics

To unlock the potential of your data for future-oriented decision-making, embracing machine learning and predictive analytics is necessary. Cloud-based platforms open the door to advanced algorithms and models that you can deploy with relative ease. Using structured and unstructured data in your cloud environment, you can create predictive models that provide deeper insights into customer behaviors, operational efficiencies, and market trends. By leveraging these enhanced analytical capabilities, you gain a strategic advantage that allows you to stay ahead in an ever-changing business landscape.

By employing machine learning techniques, your organization can progress from hindsight analytics to foresight capabilities. By training your models carefully on comprehensive datasets, you improve prediction accuracy and can recognize patterns that traditional analytical methods might miss. This foresight equips you, as a decision-maker, with the insights needed to anticipate changes, refine strategies, and optimize operations. With cloud resources, you can retrain or update models as new data arrives, so that your predictions reflect the most current and relevant data.
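Updating predictions as new data arrives can be illustrated with simple linear regression maintained through running sums, which lets the ordinary least-squares fit be recomputed exactly after each observation without storing the full history. This is a minimal sketch on hypothetical data, not a production ML pipeline; for large or poorly scaled inputs, centered (Welford-style) updates are numerically safer than raw sums.

```python
class OnlineLinearRegression:
    """Simple linear regression (y = a + b*x) updated one observation at a time."""

    def __init__(self):
        self.n = 0
        self.sx = self.sy = self.sxx = self.sxy = 0.0

    def update(self, x: float, y: float) -> None:
        # Only running sums are kept; past observations are not stored.
        self.n += 1
        self.sx += x
        self.sy += y
        self.sxx += x * x
        self.sxy += x * y

    def coefficients(self) -> tuple[float, float]:
        denom = self.n * self.sxx - self.sx ** 2
        if denom == 0:
            raise ValueError("need at least two distinct x values")
        slope = (self.n * self.sxy - self.sx * self.sy) / denom
        intercept = (self.sy - slope * self.sx) / self.n
        return intercept, slope

    def predict(self, x: float) -> float:
        intercept, slope = self.coefficients()
        return intercept + slope * x

model = OnlineLinearRegression()
for x, y in [(1, 3.0), (2, 5.0), (3, 7.0)]:  # hypothetical points on y = 1 + 2x
    model.update(x, y)
print(model.predict(4))
```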

Machine learning doesn’t merely support traditional analytics; it augments and redefines it. By harnessing cutting-edge technologies available in the cloud, you can engage in iterative learning and continuous improvement of your analytics processes. As algorithms learn from incoming data, the precision of predictions and outcomes becomes increasingly refined, allowing you to establish a proactive stance in your analytics strategy. The elastic nature of cloud resources also facilitates extensive experimentation with various machine learning approaches, enabling you to discover novel solutions tailored to meet your organization’s unique needs.

Cloud Providers for Data Engineering and Analytics

Many of the most powerful capabilities of modern computing have coalesced in the cloud, fundamentally altering the landscape of data engineering and analytics. In this section, you will explore the capabilities offered by leading cloud providers that have positioned themselves as stalwarts in data-centric solutions. Among them, Amazon Web Services (AWS) and Microsoft Azure stand out as pioneering forces that give you access to large-scale computational power and analytical insight. Their offerings extend far beyond storage and computation, presenting you with a suite of advanced features designed to meet the specific needs of your data projects.

Amazon Web Services (AWS)

One of the most notable advantages of Amazon Web Services (AWS) is its extensive ecosystem for data engineering tasks. As you navigate AWS, you will find services covering each stage of the data lifecycle, from ingestion to storage, processing, and analytics. Amazon S3 (Simple Storage Service) provides scalable object storage, and Amazon Redshift provides data warehousing. With these services, you can manage large and growing data volumes while keeping data quickly accessible for analysis, strengthening your organization's ability to act on insights promptly.

Moreover, AWS offers data processing services such as AWS Glue and Amazon EMR (Elastic MapReduce), which help you build robust data pipelines and process large datasets efficiently. AWS Glue is serverless, and EMR runs managed big data frameworks such as Apache Spark on clusters (with a serverless option as well); both reduce the operational overhead typically associated with data engineering. You can automate extract, transform, and load (ETL) processes, integrating diverse data sources and preparing them for analysis. This agility lets you focus more of your energy on deriving insights rather than on managing the cacophony of data operations.

In addition to these foundational layers, AWS offers machine learning and analytics services such as Amazon SageMaker and Amazon QuickSight, which let you build predictive models and visualize your data. Because these services integrate with one another, the insights derived from your data processes remain actionable and can be presented in ways that support informed decision-making within your organization. Across data engineering and analytics, AWS aims to package complex capabilities into accessible services.

Microsoft Azure

An integral player in the cloud industry, Microsoft Azure offers a formidable arsenal of services tailored for data engineering and analytics. Your exploration of Azure will reveal a comprehensive suite of tools that empower you to design intricate data pipelines, facilitate large-scale data processing, and derive actionable intelligence. With Azure Data Lake Storage, you can not only store vast volumes of unstructured data but also leverage advanced analytics services like Azure Synapse Analytics and Azure Databricks for powerful data integration and interactive querying. This seamless architecture allows you to support diverse analysis methodologies, whether you are performing ad-hoc analytics or conducting deep learning exercises.

Azure also integrates closely with other Microsoft products and services, such as Power BI and Azure Machine Learning. This ecosystem makes it easier for you to rapidly prototype and deploy applications that analyze data, predict trends, and generate visual insights. Whether you are a data engineer or an analyst, you can collaborate effectively across teams and share findings quickly. Furthermore, its ability to connect with other cloud platforms and on-premises data sources positions Azure as a potential central hub for your data operations.

Additionally, Azure’s commitment to security and compliance cannot be overlooked. As you engage with your data in this cloud environment, you will find that Azure incorporates advanced security protocols designed to protect sensitive information while ensuring compliance with global standards and regulations. This focus on security instills confidence as you tackle your most pressing data challenges, encouraging a culture of innovation without compromising on safety. Whether you choose AWS or Azure, your foray into cloud technologies will unveil a world rich with potential, encouraging you to push the boundaries of what is routinely achievable in data engineering and analytics.

Security and Compliance in Cloud-based Data Engineering

Many enterprises are turning to cloud-based platforms for advanced data engineering and analytics, but this transition also raises important questions about security and compliance.

Data Encryption and Access Control

Understanding the nuances of data encryption and access control is crucial here. These two elements protect your data against unauthorized access and make sensitive information easier to manage. Encrypting data both at rest in storage and in transit across networks adds an essential layer of protection. Encryption converts readable data into ciphertext that can only be turned back into readable form with the correct decryption key. This means that even if encrypted data is stolen in a breach, it remains unreadable to attackers who do not also obtain the keys.

Access control is another pillar of robust cloud security. It defines who can access your data, under what conditions, and what actions they may perform on it. By implementing role-based access control (RBAC) or attribute-based access control (ABAC), you can tailor access permissions precisely to your organization's operational needs. This strengthens data security and reduces the potential for human error, often a weak link in the data management chain. Clearly delineated roles and responsibilities, combined with strong authentication measures such as multi-factor authentication, help ensure that only the right individuals work with your sensitive data, further reinforcing your cloud security posture.
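The difference between the two models can be sketched in a few lines: RBAC grants permissions through roles, while ABAC evaluates a policy over attributes of the user and the resource. The roles, attributes, and policy below are hypothetical; in practice these rules are expressed declaratively in your provider's identity and access management service rather than in application code.

```python
# Hypothetical role definitions: role -> set of (action, resource) pairs.
ROLE_PERMISSIONS = {
    "analyst": {("read", "sales_db")},
    "engineer": {("read", "sales_db"), ("write", "sales_db")},
}

def rbac_allows(roles: set[str], action: str, resource: str) -> bool:
    """RBAC: allowed if any of the user's roles grants (action, resource)."""
    return any((action, resource) in ROLE_PERMISSIONS.get(role, set())
               for role in roles)

def abac_allows(user: dict, action: str, resource: dict) -> bool:
    """ABAC: evaluate an illustrative policy over user and resource attributes.

    Policy (hypothetical): only reads are allowed; reading 'confidential'
    data additionally requires the user's department to match the data
    owner's department and the session to be MFA-authenticated.
    """
    if action != "read":
        return False
    if resource.get("classification") == "confidential":
        return (user.get("department") == resource.get("owner_department")
                and bool(user.get("mfa")))
    return True

print(rbac_allows({"analyst"}, "write", "sales_db"))
print(abac_allows({"department": "finance", "mfa": True}, "read",
                  {"classification": "confidential", "owner_department": "finance"}))
```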

Moreover, regular auditing of access logs is a proactive way to identify anomalous activity and keeps data safety a recurring part of your cloud strategy. Continuous monitoring of data access patterns can help you spot potential vulnerabilities or insider threats before they grow into larger issues. By combining encryption, strict access control, and monitoring, you can safeguard your data while meeting your organizational objectives in the cloud.
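Access-log auditing can start with simple heuristics. The sketch below flags users who access data outside business hours or touch more distinct datasets than expected; the log format, the 08:00 to 18:00 window, and the two-dataset limit are assumptions, and real deployments would feed the provider's audit logs into a monitoring or SIEM tool.

```python
from collections import Counter
from datetime import datetime

# Hypothetical access-log entries: (ISO timestamp, user, dataset).
LOG = [
    ("2024-05-06T10:15:00", "alice", "orders"),
    ("2024-05-06T11:02:00", "bob", "orders"),
    ("2024-05-06T02:47:00", "bob", "customers"),
    ("2024-05-06T02:49:00", "bob", "payroll"),
    ("2024-05-06T02:51:00", "bob", "contracts"),
]

def flag_suspicious(log, business_hours=(8, 18), max_datasets=2):
    """Return sorted users with off-hours access or unusually broad access."""
    start, end = business_hours
    off_hours = {user for ts, user, _ in log
                 if not start <= datetime.fromisoformat(ts).hour < end}
    # Count distinct datasets per user.
    distinct = {(user, dataset) for _, user, dataset in log}
    breadth = Counter(user for user, _ in distinct)
    too_broad = {user for user, n in breadth.items() if n > max_datasets}
    return sorted(off_hours | too_broad)

print(flag_suspicious(LOG))
```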

Regulatory Compliance and Governance

On the topic of regulatory compliance and governance, you must be acutely aware that regulations such as the General Data Protection Regulation (GDPR) and the Health Insurance Portability and Accountability Act (HIPAA) require organizations to handle personal data with care and integrity. These regulations are designed to protect individuals' rights and personal information, emphasizing transparent data usage and robust security measures. Non-compliance can lead to significant financial penalties and damage to your brand's reputation. Integrating compliance into your data engineering processes is therefore not optional; it is a requirement that lays the groundwork for ethical and responsible data management.
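One technique that supports GDPR-style data protection is pseudonymization: replacing direct identifiers with tokens. The sketch uses a keyed hash (HMAC-SHA256 from Python's standard library), so the same email always maps to the same token and joins keep working. The key shown is a placeholder assumption; in practice it would live in a managed secret store. Note that GDPR still treats pseudonymized data as personal data, so this reduces risk rather than removing obligations.

```python
import hashlib
import hmac

# Placeholder key for illustration only; a real key would be loaded from
# a managed secret store (KMS or vault), never hard-coded.
PSEUDONYM_KEY = b"example-key-do-not-use"

def pseudonymize(value: str, key: bytes = PSEUDONYM_KEY) -> str:
    """Replace an identifier with a keyed hash (HMAC-SHA256, hex-encoded).

    The mapping is deterministic for a given key, so joins across tables
    still work, but tokens cannot practically be linked back to the
    original values without the key.
    """
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()

record = {"email": "jane@example.com", "purchase_total": 42.0}
safe = {**record, "email": pseudonymize(record["email"])}
print(safe)
```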

Cloud platforms also offer automated compliance monitoring tools that continuously evaluate your resources against configured rules and alert you to potential violations. These tools help you adjust procedures and policies to meet regulatory demands, so your organization stays compliant while you continue to innovate. Strong governance frameworks that define how data is collected, used, and shared will underpin your broader compliance strategy. A data governance committee can provide continual oversight, adapt policies as regulations change, and clarify responsibilities across teams within your organization. Ultimately, mastering the intricacies of regulatory compliance and governance will let you navigate the cloud landscape confidently while using the full power of your data assets securely.

Cloud-based Data Integration and Interoperability

To maximize the benefits of cloud technologies for data engineering and analytics, you must consider the synergy between various data sources and systems within your organization. Cloud-based data integration offers a framework that fosters seamless interoperability, allowing you to effectively connect disparate data sources, applications, and services. This process creates a unified environment where data flows effortlessly, giving you real-time insights and improving decision-making capabilities. By implementing cloud-based integration solutions, you’ll not only enhance your data workflow but also facilitate collaboration among cross-functional teams who rely on consistent and accurate data for their operations.

Data APIs and Microservices

The role of Data APIs and microservices in cloud-based integration cannot be overstated. By leveraging RESTful APIs, you enable your applications to communicate effortlessly, transmitting data in a structured and easily consumable format. This opens the door to a world of interoperability, as you can integrate third-party services and tools with your existing infrastructure. Imagine being able to pull data from multiple sources in real-time, or push analytics to diverse platforms through simple API calls. This flexibility not only enhances your data landscape, but also empowers you to tailor solutions that best fit your operational needs.
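A data API usually returns results in pages so clients can retrieve large datasets incrementally. The sketch below shows a server-side response shape and a client loop that follows `next_page` until it is null; the endpoint, field names, and in-memory records are hypothetical, and the HTTP layer (routing, status codes) is omitted.

```python
import json

# Hypothetical in-memory records standing in for a backing data store.
RECORDS = [{"id": i, "value": i * 10} for i in range(1, 8)]

def list_records(page: int = 1, page_size: int = 3) -> str:
    """Server side: return one page of records as a JSON document.

    The response carries the items plus the number of the next page,
    or null when there are no more pages.
    """
    if page < 1 or page_size < 1:
        raise ValueError("page and page_size must be positive")
    start = (page - 1) * page_size
    items = RECORDS[start:start + page_size]
    has_more = start + page_size < len(RECORDS)
    return json.dumps({"items": items, "page": page,
                       "next_page": page + 1 if has_more else None})

def fetch_all(page_size: int = 3) -> list[dict]:
    """Client side: follow next_page links until the server returns null."""
    page, collected = 1, []
    while page is not None:
        body = json.loads(list_records(page, page_size))
        collected.extend(body["items"])
        page = body["next_page"]
    return collected

print(len(fetch_all()))
```

Keeping the response shape consistent across endpoints is what makes a client loop like this reusable, which supports the standardization discussed in this section.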

The beauty of microservices architecture lies in its scalability and resilience. You can build modular applications that are more agile and can easily adapt to changes in the business landscape. Each microservice handles a specific task, allowing you to upgrade or replace individual components without disturbing the entire system. This means that when you need to introduce new data sources or functionalities, you can do so effortlessly while minimizing downtime or performance issues. Ultimately, this level of flexibility makes it easier for you to innovate, create, and test new data analytics solutions that keep you ahead of the curve.

Moreover, incorporating Data APIs and microservices promotes a culture of reuse and standardization across your organization. By developing a library of well-defined APIs, you establish a set of guidelines for how data should be accessed and manipulated. This not only reduces redundancy but also ensures that your entire team follows best practices when it comes to data integration. Consequently, you’ll find that your data ecosystem becomes more cohesive and easier to manage, fostering a data-driven culture where insights can be gleaned quickly and effectively.

Cloud-based ETL and Data Migration

Cloud-based ETL (Extract, Transform, Load) and data migration are essential in today's fast-paced digital world. Cloud platforms can automate the ETL process, allowing you to extract data from various sources, transform it to meet analytical requirements, and load it into your chosen cloud data warehouse or data lake. This approach lets you efficiently handle large volumes and varieties of data as they flow into your system. In this way, you can focus on deriving insights that advance your business's strategic goals instead of grappling with tedious data preparation tasks.
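The three ETL stages can be sketched end to end with Python's standard library. The CSV contents, the fixed currency rates, and the `orders` table are hypothetical, and an in-memory SQLite database stands in for a cloud warehouse.

```python
import csv
import io
import sqlite3

# Extract source: hypothetical CSV export from an upstream system.
RAW_CSV = """order_id,customer,amount,currency
1,Acme,100.00,USD
2,Globex,85.50,EUR
3,Acme,,USD
"""

# Assumed fixed conversion rates, for illustration only.
TO_USD = {"USD": 1.0, "EUR": 1.1}

def extract(text: str) -> list[dict]:
    return list(csv.DictReader(io.StringIO(text)))

def transform(rows: list[dict]) -> list[tuple]:
    out = []
    for r in rows:
        if not r["amount"]:  # drop rows with a missing amount
            continue
        usd = round(float(r["amount"]) * TO_USD[r["currency"]], 2)
        out.append((int(r["order_id"]), r["customer"].strip(), usd))
    return out

def load(rows: list[tuple], conn: sqlite3.Connection) -> None:
    conn.execute("CREATE TABLE IF NOT EXISTS orders "
                 "(order_id INTEGER PRIMARY KEY, customer TEXT, amount_usd REAL)")
    conn.executemany("INSERT OR REPLACE INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()

conn = sqlite3.connect(":memory:")
load(transform(extract(RAW_CSV)), conn)
print(conn.execute("SELECT * FROM orders ORDER BY order_id").fetchall())
```

Using `INSERT OR REPLACE` keyed on `order_id` makes the load idempotent: rerunning the job after a partial failure overwrites rows instead of duplicating them, a useful property during migrations.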

Moreover, cloud-based ETL and data migration spare you much of the heavy lifting often associated with traditional, on-premises solutions. Cloud services provide built-in scalability, so as your data grows, so can your processing capabilities. Elastic resources let you adjust capacity dynamically based on your workloads, so you can handle spikes in data volume without paying for idle capacity the rest of the time. This flexibility allows you to take a truly agile approach to your data workflows, facilitating innovation and experimentation in your analytics initiatives.

Advanced Analytics and Visualization

Your ability to harness advanced analytics and visualization techniques will significantly amplify the power of your data engineering initiatives. As data continues to grow in complexity and volume, leveraging sophisticated methods for analysis and visualization can transform raw information into insightful narratives that drive decision-making. This section will guide you through some pivotal aspects of advanced analytics, focusing on predictive modeling and data visualization, both of which are instrumental in deriving actionable insights from your data.

- Utilizing interactive dashboards for real-time insights

- Implementing advanced analytics tools for predictive insights

- Employing machine learning for data-driven decision-making

- Integrating storytelling techniques into data presentation

Types of Advanced Analytic Techniques

| Technique | Description |
|---|---|
| Predictive Analytics | Analyzing historical data to predict future outcomes and trends. |
| Data Visualization | Representing data in a visual context to make complex data more accessible and understandable. |

Data Visualization and Storytelling

To truly resonate with your audience, data visualization and storytelling must become interconnected components of your analytics strategy. Your audience is no longer satisfied with tables filled with data; they crave engaging narratives that contextualize that information. By employing techniques such as infographics, animated visualizations, and interactive dashboards, you can present complex datasets in an intuitive and accessible manner. The key lies in assembling data points that tell a compelling story—one that drives home your message and allows stakeholders to make informed decisions.

To achieve this, you should focus on elements of design that enhance comprehension and retention of information. The thoughtful application of colors, shapes, and layouts can significantly impact how the message is perceived. Avoid clutter—simplifying your visualizations to highlight key figures while minimizing distractions will ensure that your audience can extract insights quickly. By consolidating your findings into coherent narratives, you’re not just sharing data; you are championing a cause backed by empirical evidence, which is decisive in persuading stakeholders.

To maximize the effectiveness of your storytelling approach, consider incorporating real-world examples and scenarios that your audience can relate to. When you present data within a familiar context, you create a connection that enhances the emotional impact of your insights. This creates deeper engagement, as people are more likely to invest in a story that resonates with their own experiences. Ultimately, a well-crafted visualization coupled with a compelling narrative can catalyze action, drive innovation, and foster a culture of data-driven decision-making within your organization.

Predictive Modeling and Simulation

Analytics is the compass that guides you through a data-driven landscape, allowing you to anticipate future trends and potential outcomes. In predictive modeling, you employ statistical techniques and algorithms to sift through historical data, recognizing patterns that can inform your projections. The primary goal is to convert uncertainty into informed probabilities, giving you a competitive advantage in fields ranging from finance to marketing and beyond. By developing robust predictive models, you can use data not just to understand the present but to forecast likely future states, with an accuracy you can measure against held-out data and improve over time.
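As a minimal illustration of predictive modeling, the sketch below fits a straight-line trend to a short series by ordinary least squares and extrapolates it forward. The order-volume numbers are hypothetical, and a real model would add seasonality, explanatory features, and error estimates.

```python
def fit_linear_trend(ys):
    """Fit y = intercept + slope * t by ordinary least squares, with t = 0, 1, ..., n-1."""
    n = len(ys)
    if n < 2:
        raise ValueError("need at least two observations")
    ts = range(n)
    t_mean = (n - 1) / 2
    y_mean = sum(ys) / n
    cov = sum((t - t_mean) * (y - y_mean) for t, y in zip(ts, ys))
    var = sum((t - t_mean) ** 2 for t in ts)
    slope = cov / var
    intercept = y_mean - slope * t_mean
    return intercept, slope

def forecast(ys, horizon):
    """Extrapolate the fitted trend `horizon` steps past the last observation."""
    intercept, slope = fit_linear_trend(ys)
    n = len(ys)
    return [intercept + slope * (n + h) for h in range(horizon)]

# Hypothetical monthly order volumes
history = [100, 110, 120, 130, 140]
print(forecast(history, 2))  # [150.0, 160.0]
```

A trend line like this is best treated as a baseline: a more complex model is only worth its cost if it beats the baseline on data it has not seen.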

Analytics provides a framework to simulate various scenarios, empowering you to evaluate the implications of different strategies before implementation. This capability is particularly beneficial when considering resource constraints or market dynamics, as it allows you to navigate potential pitfalls efficiently. By running simulations, you can measure performance under varying conditions, refining your models continuously based on real-time feedback and outcomes. As a result, the insights you derive can guide critical operational decisions, optimizing resource allocations and improving performance metrics.
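Scenario simulation is often done with Monte Carlo methods: sample the uncertain inputs many times and examine the distribution of outcomes. The sketch below compares capacity choices under uncertain demand; the demand distribution, unit revenue, and unit cost are hypothetical assumptions chosen only for illustration.

```python
import random
import statistics

def simulate_profit(capacity, n_runs=10_000, seed=42):
    """Monte Carlo estimate of profit for one capacity level under uncertain demand.

    Hypothetical assumptions: demand ~ Normal(1000, 200) truncated at zero,
    each unit sold earns 5, and each unit of capacity costs 2 whether used or not.
    """
    rng = random.Random(seed)  # seeded so runs are reproducible
    profits = []
    for _ in range(n_runs):
        demand = max(0.0, rng.gauss(1000, 200))
        sold = min(demand, capacity)
        profits.append(5 * sold - 2 * capacity)
    profits.sort()
    return {
        "mean": statistics.fmean(profits),
        "p5": profits[int(0.05 * n_runs)],  # 5th-percentile (downside) outcome
    }

for capacity in (800, 1000, 1200):
    result = simulate_profit(capacity)
    print(capacity, round(result["mean"]), round(result["p5"]))
```

Looking at both the mean and the 5th-percentile profit exposes the trade-off between expected return and downside risk for each strategy before any of them is implemented.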

Predictive modeling is a journey characterized by iterative refinement and reassessment. You’ll find that not all models yield the same level of accuracy or applicability, and thus, it’s important to employ cross-validation techniques to assess model robustness. The predictive insights you glean will not only transform your approach to data analysis but also foster a proactive mindset towards risk management and strategic planning. It is crucial that as you build these models, you maintain a clear definition of your objectives, continually aligning your analytics efforts with business goals to ensure that your findings are impactful and actionable.
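Cross-validation can be sketched in a few lines: split the data into k folds, train on k − 1 of them, and score on the held-out fold. The example below assumes a one-variable linear model and mean squared error as the metric; both are stand-ins for whatever model and metric you actually use. For time-ordered data, a forward-chaining split (always training on the past and testing on the future) is usually more appropriate than the random shuffle used here.

```python
import random

def k_fold_indices(n, k, seed=0):
    """Shuffle indices 0..n-1 and split them into k roughly equal folds."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]

def fit_line(xs, ys):
    """Least-squares line through (xs, ys); returns a prediction function."""
    n = len(xs)
    x_mean, y_mean = sum(xs) / n, sum(ys) / n
    var = sum((x - x_mean) ** 2 for x in xs)
    slope = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys)) / var
    return lambda x: y_mean + slope * (x - x_mean)

def cross_validate(xs, ys, k=5):
    """Return the mean squared error on each held-out fold."""
    scores = []
    for fold in k_fold_indices(len(xs), k):
        held_out = set(fold)
        train = [i for i in range(len(xs)) if i not in held_out]
        model = fit_line([xs[i] for i in train], [ys[i] for i in train])
        errors = [(ys[i] - model(xs[i])) ** 2 for i in fold]
        scores.append(sum(errors) / len(errors))
    return scores
```

A large spread between fold scores is a warning sign that the model's performance depends heavily on which data it happened to see.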

Predictive modeling has the power to illuminate pathways previously obscured by uncertainty, transitioning you from reactive to proactive decision-making. By incorporating sophisticated algorithms and machine learning techniques, you can refine your models continuously, improving their predictive accuracy over time. Consider also the amalgamation of varied data sources to enhance the reliability of your predictions, integrating structured and unstructured data as needed. As such, predictive analytics and simulation not only serve as powerful tools but also lay down the groundwork for a culture of continuous improvement within your organization, enabling you to adapt to changing circumstances and seize new opportunities.

Cloud-based Data Governance and Quality

The world of data governance and quality is being transformed by the advancement of cloud technologies. Gone are the days of traditional data management practices that often led to fragmented policies and inconsistent data quality. In a cloud-based environment, you can implement comprehensive data governance frameworks that keep your data accurate, secure, and compliant with regulations. By leveraging cloud platforms, you gain the agility and scalability needed to deploy governance strategies that keep pace with the rapid evolution of data analytics technologies, significantly strengthening your organization’s decision-making capabilities.

Data Profiling and Quality Metrics

Any effective data governance strategy must prioritize data profiling and the establishment of quality metrics. These metrics serve as the backbone of your data management framework, allowing you to monitor the health of your data continuously. With cloud technologies, you can automate the profiling process, employing tools that scan your datasets to identify anomalies, redundancies, and inconsistencies. This approach not only saves time but also raises the quality of the insights derived from your data, because problems are detected and flagged before they propagate into downstream reports and models.
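To make "quality metrics" concrete, the sketch below profiles a small batch of records for two common metrics: the missing-value rate per column and the number of duplicate keys. The column names, sample rows, and 20% threshold are hypothetical; managed profiling services compute many more statistics, but the principle is the same.

```python
def profile(rows, key):
    """Compute simple quality metrics for a list of dict records.

    Returns the row count, the share of missing (None or "") values per column,
    and how many rows repeat a value already seen in the `key` column.
    """
    n = len(rows)
    columns = sorted({col for row in rows for col in row})
    null_rate = {
        col: sum(1 for row in rows if row.get(col) in (None, "")) / n
        for col in columns
    }
    seen, duplicates = set(), 0
    for row in rows:
        value = row.get(key)
        if value in seen:
            duplicates += 1
        seen.add(value)
    return {"rows": n, "null_rate": null_rate, "duplicate_keys": duplicates}

# Hypothetical customer extract with typical quality problems
rows = [
    {"id": 1, "email": "a@example.com", "country": "DE"},
    {"id": 2, "email": "", "country": "FR"},
    {"id": 2, "email": "c@example.com", "country": None},
    {"id": 4, "email": "d@example.com"},
]
report = profile(rows, key="id")
# Flag columns whose missing-value rate exceeds a (hypothetical) 20% threshold
flagged = [col for col, rate in report["null_rate"].items() if rate > 0.2]
print(report["duplicate_keys"], flagged)  # 1 ['country', 'email']
```

Running a check like this on every load, and storing the results, turns data quality from a one-off audit into a metric you can trend and alert on.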

Moreover, in the era of big data, the complexity of sourcing and storing data from varied platforms can lead to significant quality issues. The cloud provides you with the capability to implement dynamic quality assessment measures that are data-specific and context-sensitive. Through the use of advanced algorithms and machine learning techniques, you can continuously refine your quality metrics based on user feedback and evolving business needs. As a result, your data governance becomes a living entity, adapting to changes in data landscapes while ensuring that quality remains paramount.

Ultimately, the integration of cloud-based data profiling and quality metrics allows you to transform your organization from a reactive to a proactive stance regarding data management. By establishing a robust system for monitoring and controlling your data, you empower your teams to make informed decisions based on reliable information. This not only increases operational efficiency but also lays the groundwork for driving innovations that can propel your business forward.

Data Lineage and Provenance

To fully comprehend the value of your data, you must delve into data lineage and provenance. Understanding where your data comes from, how it is transformed across various systems, and how it moves through your analytical processes gives you insights that can inform governance policies and strategies. The cloud excels in providing tools that facilitate this transparency through visual mapping and tracking of your data flows. By preserving comprehensive records of your data’s origin and changes, you not only enhance data quality but also fortify your compliance initiatives.

Moreover, as your data lineage becomes traceable and readily available, you will find that accountability within your teams increases. When you know the trail of your data—from capture through transformation to storage—the responsibility for maintaining its integrity becomes clearer. This practice enables stakeholders to not only understand the current state of the data but also to evaluate the potential implications of any changes being proposed. In this way, cloud technologies empower you to foster a culture of data stewardship within your organization, where every team member takes ownership of the quality and governance of data they utilize.
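Under the hood, lineage is a directed graph of datasets. The sketch below stores hypothetical lineage edges and walks the graph in both directions: upstream to answer "where did this table come from?" and downstream to answer "what would a change here affect?". The dataset names are invented for illustration; cloud catalog services maintain such graphs automatically.

```python
from collections import defaultdict, deque

# Hypothetical lineage: each edge reads "source feeds target".
EDGES = [
    ("raw.orders", "staging.orders"),
    ("raw.customers", "staging.customers"),
    ("staging.orders", "mart.revenue"),
    ("staging.customers", "mart.revenue"),
    ("mart.revenue", "dashboard.exec_kpis"),
]

def build_graph(edges):
    """Index the edges in both directions."""
    downstream, upstream = defaultdict(set), defaultdict(set)
    for src, dst in edges:
        downstream[src].add(dst)
        upstream[dst].add(src)
    return downstream, upstream

def reachable(graph, start):
    """Breadth-first search: every node reachable from `start` via one or more edges."""
    seen, queue = set(), deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, ()):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen

downstream, upstream = build_graph(EDGES)
print(sorted(reachable(upstream, "mart.revenue")))  # where the data came from
print(sorted(reachable(downstream, "raw.orders")))  # what a change would affect
```

The downstream query is what makes impact analysis practical: before altering `raw.orders`, you can list every table and dashboard that depends on it.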

Provenance, in the data context, refers to the detailed history of your data, including its origins, transformations, and all the processes it has undergone within your systems. By effectively capturing provenance details through cloud-based solutions, you arm yourself with the ability to conduct thorough audits, create more consistent compliance reports, and ultimately build trust with your stakeholders. Embracing such practices not only safeguards against potential data-related pitfalls but also enhances the overall credibility of your data-driven initiatives.
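One lightweight way to capture provenance is to write an audit record each time a job produces an output, tying a content hash of the output to the hashes of its inputs, the transformation that ran, and who ran it. The sketch below assumes that approach; the field names, job name, and file paths are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

def provenance_record(output_bytes, inputs, transformation, actor):
    """Build an audit record linking an output to its inputs and producing step.

    `inputs` maps input dataset names to their SHA-256 digests.
    """
    return {
        "output_sha256": hashlib.sha256(output_bytes).hexdigest(),
        "inputs": dict(sorted(inputs.items())),
        "transformation": transformation,
        "actor": actor,
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    }

raw = b"id,amount\n1,10\n2,15\n"
clean = b"id,amount\n1,10.0\n2,15.0\n"  # hypothetical output of a cleaning job
record = provenance_record(
    clean,
    inputs={"raw/orders.csv": hashlib.sha256(raw).hexdigest()},
    transformation="cast_amounts_v3",
    actor="etl-service",
)
print(json.dumps(record, indent=2))
```

During an audit, recomputing the hash of the stored output and comparing it with `output_sha256` shows whether the data has changed since it was recorded.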

Scalability and Performance Optimization

Even as data analytics and engineering evolve rapidly, scalability and performance optimization remain critical challenges. With the vast amounts of data generated every day, organizations need solutions that can adapt to varying workloads without performance degrading. One way to accomplish this is through cloud-based technologies, which provide the essential tools and architectures to efficiently handle data pipelines and analytics processes. By leveraging the cloud, you can dynamically adjust your resources to meet fluctuating demands without incurring unnecessary costs, ensuring that your analytics capabilities keep pace with the growth of your data.

Cloud-based Auto-scaling and Load Balancing

One of the most potent advantages of cloud computing is its capacity for auto-scaling and load balancing. These features empower your organization to automatically adjust processing power and resources in real-time, in response to varying workloads. When the demand for data processing spikes, the cloud infrastructure can allocate additional resources, ensuring that your systems can handle the increased load without degradation in performance. Conversely, when demand falls, the system can reduce the resources allocated, allowing you to optimize costs while maintaining necessary performance levels. As a result, your focus can remain on interpreting insights and driving data-driven strategies, rather than worrying about infrastructure limitations.
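The core of many auto-scaling policies is a proportional rule. The Kubernetes Horizontal Pod Autoscaler, for example, documents its calculation as desired = ceil(current × currentMetric / targetMetric), and managed target-tracking policies follow broadly similar logic. The sketch below implements that rule with clamping and a tolerance band; the bounds and the 10% tolerance are hypothetical defaults, not any provider's settings.

```python
import math

def desired_capacity(current, metric, target, min_cap=1, max_cap=20, tolerance=0.1):
    """Proportional target-tracking rule for the number of instances.

    Scales capacity by metric/target so the per-instance metric moves toward
    `target`, clamps the result to [min_cap, max_cap], and leaves capacity
    unchanged when the ratio is within `tolerance` of 1.0 to avoid flapping.
    """
    ratio = metric / target
    if abs(ratio - 1.0) <= tolerance:
        return current
    # Multiply before dividing so whole-number cases stay exact in floating point.
    return max(min_cap, min(max_cap, math.ceil(current * metric / target)))

print(desired_capacity(current=4, metric=90, target=60))  # CPU 90% vs 60% target -> 6
print(desired_capacity(current=6, metric=20, target=60))  # quiet period -> 2
```

The tolerance band and the min/max bounds matter as much as the formula: without them, small metric fluctuations cause constant scaling churn, and a traffic spike could scale costs without limit.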

Moreover, cloud providers commonly offer native load balancing services that distribute workloads across servers or instances. This feature is crucial in preventing any single resource from becoming a bottleneck, thereby enhancing your system’s overall efficiency. By optimizing how requests are handled, you can keep your analytics operations smooth and uninterrupted as request volumes grow. Load balancing allows your data engineers and analysts to retrieve data with minimal latency, facilitating timely decision-making and fostering a culture of agility within your organization.
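Two common balancing strategies are round robin, which hands requests to backends in turn, and least connections, which sends each request to the backend currently doing the least work. Managed load balancers implement these and other algorithms for you, so the sketch below is only an illustration of the idea; the backend names are hypothetical.

```python
import itertools

class RoundRobin:
    """Cycle through backends in a fixed order."""

    def __init__(self, backends):
        self._cycle = itertools.cycle(backends)

    def pick(self):
        return next(self._cycle)

class LeastConnections:
    """Send each request to the backend with the fewest active connections."""

    def __init__(self, backends):
        self.active = {b: 0 for b in backends}

    def pick(self):
        backend = min(self.active, key=self.active.get)  # ties go to the first listed
        self.active[backend] += 1
        return backend

    def release(self, backend):
        """Call when a request finishes."""
        self.active[backend] -= 1

rr = RoundRobin(["worker-1", "worker-2", "worker-3"])
print([rr.pick() for _ in range(4)])  # ['worker-1', 'worker-2', 'worker-3', 'worker-1']
```

Round robin is simplest when requests cost roughly the same; least connections adapts better when some queries (a large analytical scan, say) take far longer than others.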

It’s important to utilize these auto-scaling and load-balancing features to optimize your architecture continuously. You can set metrics and thresholds that trigger these mechanisms based on your specific use cases and requirements. For example, if you recognize a pattern of increased data load during specific hours, creating preemptive auto-scaling rules can ensure your resources are ready before those demands hit. Thus, not only do you enhance performance, but you also maintain an agile and resilient system capable of adapting to your data’s lifecycle.
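Preemptive rules like these can be expressed as a schedule of minimum capacities that takes effect shortly before each known busy window. The hours, capacities, and 15-minute lead time below are hypothetical, and this sketch does not handle windows that cross midnight.

```python
from datetime import datetime

# Hypothetical rules: (start_hour, end_hour, minimum capacity), in UTC.
SCHEDULE = [
    (7, 10, 8),   # morning batch loads
    (17, 20, 6),  # end-of-day reporting
]
BASELINE_MIN = 2

def scheduled_min_capacity(now, schedule=SCHEDULE, baseline=BASELINE_MIN, lead_minutes=15):
    """Return the capacity floor for `now`, raised `lead_minutes` before each busy window."""
    minute_of_day = now.hour * 60 + now.minute
    floor = baseline
    for start, end, capacity in schedule:
        if start * 60 - lead_minutes <= minute_of_day < end * 60:
            floor = max(floor, capacity)
    return floor

print(scheduled_min_capacity(datetime(2024, 6, 3, 6, 50)))  # 8: the 07:00 window starts soon
```

A reactive rule can then only raise capacity above this floor; taking the larger of the scheduled floor and the reactive target gives you both behaviors.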

Caching and Content Delivery Networks

In a world dominated by big data, the importance of efficient data retrieval can hardly be overstated. Caching and Content Delivery Networks (CDNs) are key strategies for enhancing performance by minimizing latency and reducing server load. By temporarily storing copies of frequently accessed data, caching allows you to deliver content quickly to users and data analysts alike. Instead of repeatedly querying database systems that may become bottlenecks, you can serve data directly from the cache, significantly improving response times and system throughput. This streamlined access to data leads to faster analytics and insights, enabling better decision-making in real time.
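A cache in front of a slow query can be sketched with an ordered dictionary: entries expire after a time-to-live (TTL), and the least recently used entry is evicted when the cache is full. This is an in-process illustration only; in the cloud you would more likely use a managed cache such as Redis or Memcached, and the `loader` callback is a hypothetical stand-in for your real database query.

```python
import time
from collections import OrderedDict

class TTLCache:
    """Least-recently-used cache whose entries also expire after `ttl` seconds."""

    def __init__(self, maxsize=128, ttl=60.0, clock=time.monotonic):
        self.maxsize, self.ttl, self.clock = maxsize, ttl, clock
        self._data = OrderedDict()  # key -> (expires_at, value)

    def get(self, key, loader):
        """Return a cached value, calling `loader(key)` on a miss or after expiry."""
        now = self.clock()
        entry = self._data.get(key)
        if entry is not None and entry[0] > now:
            self._data.move_to_end(key)  # mark as most recently used
            return entry[1]
        value = loader(key)  # e.g. the slow database query
        self._data[key] = (now + self.ttl, value)
        self._data.move_to_end(key)
        if len(self._data) > self.maxsize:
            self._data.popitem(last=False)  # evict the least recently used entry
        return value
```

Injecting the clock makes expiry easy to test. The TTL bounds how stale a cached answer can be, which is the main trade-off to tune against database load.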

Content Delivery Networks provide an additional layer of optimization by distributing content across geographically dispersed servers. By utilizing a CDN, you can bring data as close to your users as possible, further reducing latency and ensuring that large datasets are accessible swiftly. This is particularly valuable for organizations with a global footprint, where data retrieval speed is critical to maintaining user engagement and satisfaction. Adopting both caching and CDNs can transform the way your organization approaches data delivery, giving your analytics pipelines speed and reliability.

Content strategies that actively incorporate caching and CDNs foster a data architecture where responsiveness becomes a given rather than an afterthought. In such systems, your data engineers become architects of performance, constructing pathways that not only store and deliver data effectively but also anticipate and respond to user needs in real-time. By focusing on these strategies, you pave the way for an analytics ecosystem that is not only scalable but also finely tuned for performance, setting the stage for deeper insights and informed business decisions.

Cost Optimization and Resource Management

Unlike traditional data engineering environments, which often require large up-front hardware investments and still produce hard-to-predict expenses, cloud technologies offer a flexible cost framework that can be matched to your organization’s data needs. In this landscape, understanding the nuances of cost management is crucial for keeping your cloud projects within budget while still delivering the analytics your business demands. The ability to predict and plan for costs reduces the risk of surprises and lets your organization allocate resources more effectively, maximizing the return on your cloud investment.

Cloud Cost Estimation and Budgeting

To navigate the complexities of budgeting in a cloud environment, you must first employ robust strategies for cost estimation. Engaging in a granular analysis of potential expenses—including compute resources, data storage, and network egress—allows you to develop realistic financial forecasts. Utilizing specialized cloud cost calculators and third-party budgeting tools can provide insights into how different service configurations will impact your overall spending. Taking the time to assess the costs associated with various services ensures you build your cloud strategy on a sound financial foundation, avoiding unexpected spikes in expenditures that could derail your analytics initiatives.
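A first-pass estimate can be as simple as multiplying expected usage by unit prices for each line item. The rates below are hypothetical placeholders, not any provider's actual prices; substitute figures from your provider's pricing calculator.

```python
# Hypothetical unit prices in USD; substitute your provider's published rates.
RATES = {
    "compute_hour": 0.20,      # per instance-hour
    "storage_gb_month": 0.023,
    "egress_gb": 0.09,
}

def monthly_estimate(instances, hours_per_day, storage_gb, egress_gb, rates=RATES, days=30):
    """Break a monthly bill into compute, storage, and network egress line items."""
    items = {
        "compute": instances * hours_per_day * days * rates["compute_hour"],
        "storage": storage_gb * rates["storage_gb_month"],
        "egress": egress_gb * rates["egress_gb"],
    }
    items["total"] = sum(items.values())
    return {k: round(v, 2) for k, v in items.items()}

print(monthly_estimate(instances=4, hours_per_day=10, storage_gb=5000, egress_gb=800))
```

Keeping the line items separate shows which lever matters most: here compute dominates, so scheduling instances to run only 10 hours a day has far more effect than trimming storage.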

Moreover, your budgeting strategy should rest on a clear understanding of your cloud provider’s pricing models. Familiarizing yourself with pay-as-you-go (on-demand) pricing, reserved or committed capacity, and spot instances can help you select the most economical route for your data engineering projects. You should also consider the duration and reliability requirements of your workloads: reserved capacity pays off for steady, long-running usage, while spot instances (discounted spare capacity that the provider can reclaim at short notice) suit batch jobs that can tolerate interruption. Temporary credits can also offset the cost of short-lived experiments. The key is to cultivate foresight and adaptability in your budgeting approach, which will pay dividends as your organization scales its data operations.
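The reserved-versus-on-demand decision reduces to a break-even utilization: a commitment billed for every hour of the term beats on-demand only if you would actually use the capacity for more than reserved_rate / on_demand_rate of the time. The sketch below assumes a one-month term of about 730 hours and hypothetical hourly rates.

```python
def compare_pricing(hours_needed, on_demand_rate, reserved_rate, hours_in_term=730):
    """Compare paying on demand for the hours used against a commitment billed for every hour.

    `reserved_rate` is the effective hourly price of the commitment; all rates
    are hypothetical inputs.
    """
    on_demand_cost = hours_needed * on_demand_rate
    reserved_cost = hours_in_term * reserved_rate
    return {
        "choice": "reserved" if reserved_cost < on_demand_cost else "on_demand",
        "on_demand_cost": round(on_demand_cost, 2),
        "reserved_cost": round(reserved_cost, 2),
        # Fraction of the term you must use before reserving becomes cheaper
        "break_even_utilization": round(reserved_rate / on_demand_rate, 2),
    }

print(compare_pricing(hours_needed=200, on_demand_rate=0.20, reserved_rate=0.13))
print(compare_pricing(hours_needed=650, on_demand_rate=0.20, reserved_rate=0.13))
```

With these example rates the break-even point is 65% utilization, so a workload running 200 of 730 hours should stay on demand, while one running 650 hours is cheaper on a commitment.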

Lastly, it is imperative that you consistently monitor your spending against your budget. By setting up alerts and using dashboards provided by most cloud providers, you can keep a close eye on your resource usage. Frequent reviews will not only help you stay within your financial boundaries but can also illuminate trends and usages that may merit adjustments in your overall strategy. This iterative process ensures that your budget remains aligned with both current and future cloud needs, making certain that you are never caught off guard by budget overruns.
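Cloud budgeting services typically let you alert on both actual spend and forecasted spend. The sketch below shows the underlying arithmetic with a deliberately naive linear forecast; the budget, thresholds, and spend figures are hypothetical.

```python
def budget_alerts(spend_to_date, day_of_month, days_in_month, budget, thresholds=(0.5, 0.8, 1.0)):
    """Project month-end spend linearly and report which budget thresholds are crossed."""
    forecast = spend_to_date / day_of_month * days_in_month
    return {
        "forecast": round(forecast, 2),
        "actual": [t for t in thresholds if spend_to_date >= t * budget],
        "forecast_alerts": [t for t in thresholds if forecast >= t * budget],
    }

print(budget_alerts(spend_to_date=4200, day_of_month=12, days_in_month=30, budget=10_000))
```

In this example no actual-spend threshold has been crossed yet, but the forecast already exceeds the full budget; that early warning is the main value of forecast-based alerts.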

Resource Utilization and Right-sizing

Resource utilization and right-sizing are integral elements of effective cloud cost optimization. Resource utilization refers to how efficiently your deployed resources—such as virtual machines, databases, and storage—are being used. To ensure that you are maximizing your return on investment, routinely evaluating the performance and usage patterns of your resources is critical. By monitoring various metrics such as CPU usage, memory consumption, and network throughput, you can gain insights into whether your resources are over-provisioned, under-utilized, or optimized for your workloads.

Resource management extends beyond merely identifying over-provisioned instances; it requires a proactive approach to right-sizing your cloud infrastructure. Right-sizing involves adjusting your cloud resources to fit your current needs, ensuring that you are not paying for excess capacity that is seldom used. If a virtual machine is routinely operating at only 20% capacity, transitioning to a smaller instance type can yield significant cost savings without compromising performance. This meticulous attention to resource optimization ensures that you align your infrastructure with your actual consumption, providing a direct pathway to enhanced efficiency.
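The 20% example above can be turned into a simple rule: take a high percentile of observed CPU (so occasional peaks are respected), convert it into the number of busy vCPUs, and choose the smallest size that keeps projected peak utilization under a target. The sketch assumes CPU demand scales linearly with vCPU count, and the instance sizes and 70% target are hypothetical.

```python
import math

def percentile(values, pct):
    """Nearest-rank percentile (pct in 0..100)."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]

def rightsize(vcpus, cpu_samples, target_peak=70.0, sizes=(2, 4, 8, 16, 32)):
    """Suggest the smallest size whose projected p95 CPU stays at or under `target_peak` percent.

    Returns the largest size if none fits.
    """
    p95 = percentile(cpu_samples, 95)
    busy_vcpus = vcpus * p95 / 100
    for size in sorted(sizes):
        if busy_vcpus / size * 100 <= target_peak:
            return size
    return max(sizes)

# A 16-vCPU VM that mostly idles around 20% CPU (hypothetical samples)
samples = [18, 22, 19, 25, 21, 20, 30, 17, 23, 20]
print(rightsize(16, samples))  # 8
```

Sizing on the 95th percentile rather than the average keeps headroom for the busiest periods; the same rule also recommends upsizing when a VM runs hot.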

Rightsizing involves a continuous process of evaluating your workloads and aligning them with the appropriate resources. This iterative assessment informs decisions regarding scaling up or down based on changing demands, contributing to long-term sustainability in your cloud operations. By routinely refining your approach to resource utilization, you not only control your expenses but also enhance your operational performance across your data engineering and analytics efforts.

Cloud-based Data Engineering Tools and Frameworks

Many organizations today strive to harness cloud technologies for data engineering and analytics, yet navigating the myriad tools and frameworks available can be daunting. This section examines two significant components in this space: Apache Spark and Hadoop, and cloud-based data pipelines and workflows. These technologies are at the forefront of data engineering, giving you the capabilities to process and analyze massive datasets efficiently and to feed the visualization tools built on top of them. Understanding these tools will not only enhance your data engineering skills but can fundamentally change the way you approach data analytics.

Apache Spark and Hadoop

An ever-evolving digital landscape necessitates robust frameworks for effective data processing, and Apache Spark and Hadoop have emerged as frontrunners in this realm. Apache Spark is known for in-memory computation: it keeps intermediate results in memory rather than writing them to disk, which makes it especially fast for iterative and interactive analysis of large datasets, and its Structured Streaming engine extends the same model to near-real-time processing. Because Spark offers APIs in Scala, Java, Python, and SQL, among others, you can work in a language you already know while using one engine for both batch and stream processing. This flexibility enhances your ability to build sophisticated data pipelines that meet various business needs swiftly.

Hadoop, on the other hand, offers a platform for distributed data storage and processing. The Hadoop Distributed File System (HDFS) stores terabytes to petabytes of data across clusters of machines and scales out by adding nodes. Hadoop’s MapReduce engine writes intermediate results to disk between stages, so it is generally slower than Spark for iterative workloads, but HDFS replicates each data block across several nodes (three by default), so your data remains available even when individual machines fail. The two are complementary rather than competing: Spark can run on Hadoop’s YARN resource manager and read directly from HDFS, giving you both speed and durability when handling massive datasets.
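Both Hadoop MapReduce and Spark distribute work according to the same basic dataflow: a map step emits key/value pairs, a shuffle groups them by key, and a reduce step combines each group. The single-process sketch below illustrates that pattern with the classic word count; it is plain Python, not either framework's API, and the input lines are hypothetical.

```python
from collections import defaultdict
from itertools import chain

def map_phase(line):
    """Emit (word, 1) pairs for one input record."""
    return [(word.lower(), 1) for word in line.split()]

def shuffle_phase(pairs):
    """Group values by key, as the framework does between map and reduce."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups

def reduce_phase(groups):
    """Combine each key's values into a single result."""
    return {key: sum(values) for key, values in groups.items()}

lines = ["Spark and Hadoop", "Hadoop stores data", "Spark processes data"]
counts = reduce_phase(shuffle_phase(chain.from_iterable(map(map_phase, lines))))
print(counts)
```

In Spark itself, the same job is typically written with RDD transformations such as `flatMap` and `reduceByKey`; the framework then runs the map and reduce steps in parallel and performs the shuffle across the cluster.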

By combining the strengths of Apache Spark and Hadoop, you as a data engineer can architect powerful solutions to intricate analytics challenges. The ecosystems surrounding these frameworks are rich with libraries and community support. In the cloud, managed services such as Amazon EMR or Google Cloud Dataproc let you run Spark and Hadoop jobs on clusters that are provisioned on demand and resized as workloads change. Ultimately, this lets you focus on insights and data-driven decisions rather than getting bogged down by infrastructure complexities.

Cloud-based Data Pipelines and Workflows

Apache NiFi, AWS Glue, and Google Cloud Dataflow are illustrative examples of data pipeline solutions that can power cloud data engineering workflows. These tools facilitate the movement, transformation, and orchestration of data, allowing you to build workflows that accommodate a continuous influx of information from various sources. Apache NiFi provides a visual, browser-based interface that supports dynamic data routing and lets you manage and trace data flow at every stage, from ingestion to consumption. This not only fosters efficiency but also helps you maintain high standards of data governance throughout the data lifecycle.

With cloud-based architectures, the ability to automate data ingestion and processing becomes paramount. AWS Glue integrates closely with Amazon’s ecosystem and offers serverless processing, so you can concentrate on building data models rather than managing servers and infrastructure. Meanwhile, Google Cloud Dataflow handles both stream and batch processing within a unified model, which simplifies the development and management of complex data workflows and helps keep the data you analyze timely and relevant. Each of these platforms is designed around flexibility and scalability, allowing you to adapt your pipelines as your data needs evolve.
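The unified batch/stream idea can be illustrated with plain Python generators: each stage consumes an iterable and yields results, so the same pipeline runs over a finite list (batch) or an unbounded source such as `sys.stdin` (stream). This is only an analogy for the model that Dataflow provides through Apache Beam, not Beam’s API; the JSON record format and the `amount_cents` field are hypothetical.

```python
import json
from typing import Iterable, Iterator

def parse(records: Iterable[str]) -> Iterator[dict]:
    """Parse JSON lines, skipping malformed records instead of failing the pipeline."""
    for raw in records:
        try:
            yield json.loads(raw)
        except json.JSONDecodeError:
            continue

def enrich(events: Iterable[dict]) -> Iterator[dict]:
    """Add a derived integer field from the (hypothetical) `amount` field."""
    for event in events:
        event["amount_cents"] = round(event.get("amount", 0) * 100)
        yield event

def pipeline(source: Iterable[str]) -> Iterator[dict]:
    """Compose the stages; nothing runs until the result is iterated."""
    return enrich(parse(source))

batch = ['{"id": 1, "amount": 9.99}', "not json", '{"id": 2, "amount": 5}']
print(list(pipeline(batch)))
```

Because each stage is lazy, `for event in pipeline(sys.stdin): ...` would process records one at a time as they arrive, and malformed input is skipped rather than halting the flow.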

For instance, with tools like Apache NiFi you can build a highly customizable ingestion architecture that captures information from IoT devices, social media, and other disparate sources in real time. This immediate access to information opens up avenues for innovative analytics and rapid decision-making. As you invest in understanding these tools, their workflows, and how they integrate with your cloud environments, you will develop a command of the data engineering landscape that sets you apart in a world where information fuels innovation. Working through these cloud technologies and frameworks is likely to reshape your approach to data engineering and keep you at the leading edge of analytics capabilities.

Emerging Trends and Future Directions

Edge Computing and IoT Analytics

Your journey into advanced data engineering cannot overlook the paradigm shift ushered in by edge computing and the pervasive influence of the Internet of Things (IoT). For organizations worldwide, the deployment of IoT devices is generating an immense volume of data at unprecedented speed, which requires not only efficient data processing but also analytics close to the data source. Edge computing allows data to be processed and analyzed at the edge of the network, reducing latency and bandwidth consumption while making applications more responsive. You can envisage scenarios where critical decisions are made on-site, without a round trip to a distant data center, enabling industries such as healthcare, manufacturing, and transportation to operate more efficiently and effectively.
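Much of the bandwidth saving comes from aggregating at the edge: instead of streaming every raw reading to the cloud, the device sends a periodic summary plus any readings that need immediate attention. The sketch below assumes a ten-minute window of one-per-second temperature readings and a hypothetical alert threshold.

```python
import statistics

def summarize_window(readings, alert_threshold):
    """Reduce a window of raw sensor readings to one summary message.

    Only the summary (plus any out-of-range readings) is sent upstream.
    """
    return {
        "count": len(readings),
        "mean": round(statistics.fmean(readings), 2),
        "max": max(readings),
        "alerts": [r for r in readings if r > alert_threshold],
    }

# Hypothetical: 600 temperature readings (one per second for ten minutes)
window = [70.0 + (i % 7) * 0.5 for i in range(600)]
window[420] = 95.0  # a spike worth forwarding immediately
print(summarize_window(window, alert_threshold=90.0))
```

Here 600 readings collapse into a single message, while the out-of-range value still reaches the cloud intact.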

Furthermore, as more devices become interconnected, your ability to harness the power of such data hinges upon sophisticated analytics capabilities that can handle vast streams of information generated at the edge. With the growth of edge computing, you should consider how modern architectures can be designed to facilitate distributed data processing. These architectures incorporate advanced machine learning algorithms that enable predictive maintenance in manufacturing or real-time health monitoring in the medical field. You may find that integrating edge computing with your data engineering practices leads to significant improvements in agility and reliability while providing actionable insights in real-time.
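Predictive maintenance at the edge often starts with something as modest as a rolling statistical check. The detector below keeps a fixed-size window of recent readings in constant memory and flags a value whose z-score against that window exceeds a threshold. The window size, warm-up length, and threshold are hypothetical tuning choices, and production systems would often use trained models instead.

```python
import math
from collections import deque

class RollingAnomalyDetector:
    """Flag readings far from the recent mean, using a fixed-size window."""

    def __init__(self, window=50, threshold=3.0, warmup=10):
        self.values = deque(maxlen=window)  # oldest readings drop off automatically
        self.threshold = threshold
        self.warmup = warmup

    def update(self, x):
        """Return True if `x` is anomalous relative to the current window, then add it."""
        anomalous = False
        if len(self.values) >= self.warmup:  # wait for a minimal history
            mean = sum(self.values) / len(self.values)
            var = sum((v - mean) ** 2 for v in self.values) / len(self.values)
            std = math.sqrt(var)
            anomalous = std > 0 and abs(x - mean) / std > self.threshold
        self.values.append(x)
        return anomalous

# Hypothetical vibration readings alternating between 1.0 and 1.2, then a spike
detector = RollingAnomalyDetector(window=20)
normal = [detector.update(1.0 + 0.2 * (i % 2)) for i in range(30)]
print(any(normal), detector.update(5.0))  # False True
```

Flagged readings can trigger a local action immediately and be forwarded to the cloud, where heavier models can be retrained on the accumulated history.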

Your strategic implementation of IoT analytics, enhanced by edge computing technologies, opens the door to new business models and possibilities. By leveraging the insights gained from processing data at the edge, you can uncover untapped opportunities for innovation, improve user experiences, and optimize operational efficiency. As you navigate this frontier, remember that staying informed about technological advancements in this space (such as lightweight machine learning models designed specifically for edge devices) will be pivotal in maximizing the benefits of IoT analytics in your data engineering and analytics initiatives.

Serverless Computing and Cloud Functions

With the rapid evolution of cloud technologies, serverless computing is emerging as a game changer for advanced data engineering and analytics. This architecture allows you to focus on writing code instead of managing the infrastructure that runs it, freeing you from the complexities of provisioning and scaling servers. Imagine the efficiency and agility your projects could attain when you only pay for the resources consumed during the execution of your code. Serverless computing enables you to quickly deploy applications and respond swiftly to varying workloads, effectively meeting the demands of modern data-intensive environments. You can leverage cloud functions to automate data processing tasks, trigger analytics workflows, or even manage data pipelines—all without the burden of server management.
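As an example of a cloud function that reacts to new data, the handler below follows AWS Lambda’s Python convention (a function receiving `event` and `context`) and reads each new object's bucket and key from an S3 event notification (`Records[].s3.bucket.name` and `Records[].s3.object.key`). The bucket name, path, and what happens to each object are hypothetical placeholders; no AWS SDK calls are made, so the function can be exercised locally with a hand-built event.

```python
from urllib.parse import unquote_plus

def handler(event, context):
    """Lambda-style entry point for S3 "object created" notifications.

    Collects the location of each new object; real processing would replace
    the placeholder append below.
    """
    processed = []
    for record in event.get("Records", []):
        bucket = record["s3"]["bucket"]["name"]
        key = unquote_plus(record["s3"]["object"]["key"])  # keys arrive URL-encoded
        processed.append(f"s3://{bucket}/{key}")
    return {"processed": processed}

# Local test with a trimmed-down, hypothetical sample event
sample = {"Records": [{"s3": {"bucket": {"name": "raw-zone"},
                              "object": {"key": "orders/2024-06-01/part+0001.json"}}}]}
print(handler(sample, None))
```

Keeping the handler free of side effects beyond its inputs and outputs makes it easy to unit test before wiring it to a real bucket trigger.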

Moreover, embracing serverless architecture unlocks the potential for increased collaboration and innovation. With serverless platforms, you can dynamically integrate services from different providers—be it databases, messaging systems, or AI models—creating comprehensive data ecosystems that propel your analytics capabilities to new heights. As you engage with your data landscape, you will appreciate how serverless computing facilitates the rapid iteration of ideas and prototypes, leading to faster time-to-market for your products and services. Such transformative practices encourage a culture of experimentation, allowing your teams to adopt a ‘fail fast and learn’ approach that is vital for driving innovation in data engineering.

Understanding serverless computing’s promise entails recognizing its ideal use cases. It is a poor fit for long-running processes or sustained compute-intensive applications (AWS Lambda, for example, limits a single invocation to 15 minutes), but it stands out in event-driven architectures, microservices, and APIs. Understanding these distinctions empowers you to make informed decisions when architecting solutions in your organization. As serverless computing continues to mature, offering new functionality and integrations, it may well redefine your approach to data engineering, and how you adopt it can shape the competitive edge you carve out in data analytics.

To wrap up

With these considerations in mind, it is vital for you to recognize that the integration of cloud technologies into your data engineering and analytics practices is not merely a passing trend but a transformative approach that can redefine the capabilities of your organization. By leveraging cloud infrastructures, you position your enterprise to harness unprecedented scalability, flexibility, and efficiency. The vast ecosystems offered by cloud providers enable you to streamline the management of your data pipelines and enhance your analytical techniques, all while maintaining a sharp focus on cost-effectiveness. Embracing these advancements can lead to compelling insights that drive informed decision-making and strategic growth in today’s fast-paced digital landscape.

Your journey toward mastering cloud technologies will also necessitate an understanding of the underlying paradigms that govern the relationships between data sources, processing methods, and analytical frameworks. You will discover that utilizing cloud services enables you to adopt modern methodologies such as machine learning and artificial intelligence. These methodologies are vital for unearthing hidden patterns in your data, providing you with the tools necessary to forecast trends and inform your business strategy proactively. As you probe deeper into advanced data engineering, take advantage of specialized training and resources available online to further elevate your skill set and enhance your understanding of these intricate yet vital processes.

Finally, as you embark on this path, do not overlook the importance of community and collaboration within cloud technologies. Engaging with fellow professionals will expand your perspective and introduce you to novel approaches and best practices that can significantly improve your outcomes. Your commitment to harnessing the possibilities of cloud technologies will not only place you at the forefront of technological advancement but will also empower your organization to thrive in an increasingly data-driven world. Embrace the future; the possibilities are boundless.

FAQ

Q1: What are the key benefits of using cloud technologies for data engineering and analytics?

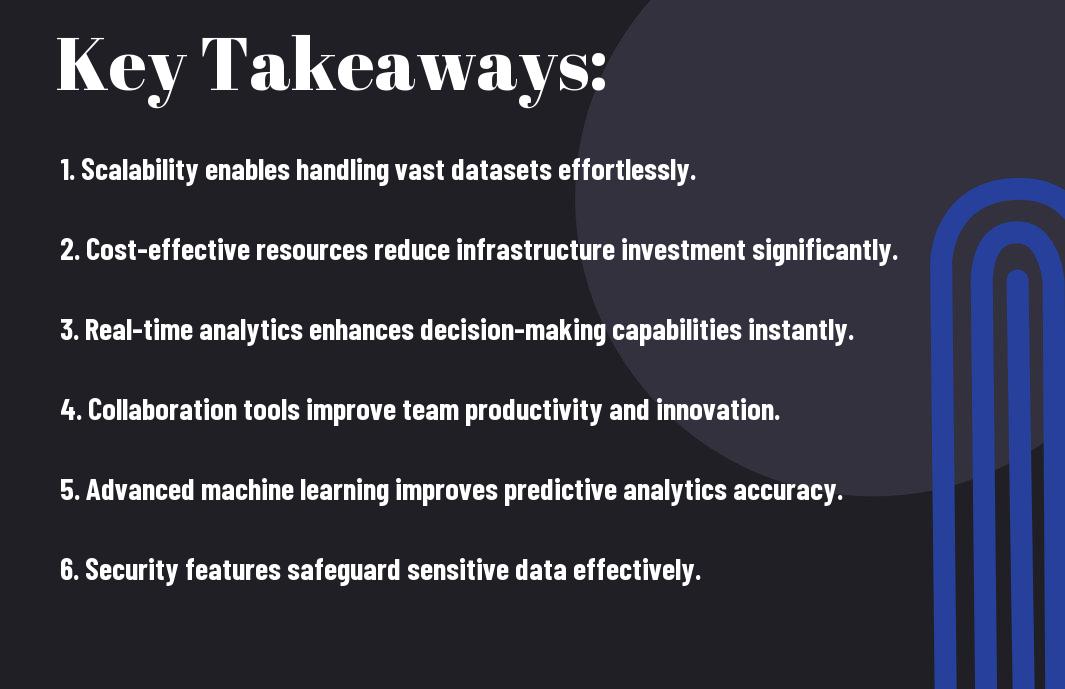

A: Cloud technologies offer numerous benefits for data engineering and analytics, including scalability, flexibility, cost-effectiveness, and accessibility. Organizations can easily scale their data infrastructure to handle large volumes of data without significant upfront investment. The pay-as-you-go model allows companies to manage costs effectively while accessing a vast array of powerful tools and services. Additionally, cloud platforms enable collaborative work as data and analytics can be accessed from anywhere, fostering innovation and facilitating remote work.

Q2: How do I choose the right cloud service provider for my data engineering needs?

A: Choosing the right cloud service provider requires careful consideration of several factors, including the specific features and tools offered, security protocols, compliance with industry regulations, performance, and pricing. Organizations should assess their data needs and project requirements, as well as evaluate the provider’s track record in data engineering services. Engaging in trials and leveraging case studies or testimonials can also help in making an informed decision.

Q3: What role do data lakes and data warehouses play in cloud data engineering?

A: Data lakes and data warehouses are vital components of cloud data engineering architectures. Data lakes are designed to store vast amounts of raw data (structured, semi-structured, and unstructured) in its native format, allowing for flexible processing and analytics. In contrast, data warehouses store structured, modeled data and are optimized for high-performance analytics and reporting. When harnessing cloud technologies, organizations can combine both, an approach often called a lakehouse, taking advantage of the strengths of each to support diverse data analytics needs.

Q4: What types of tools are commonly used in cloud-based data analytics?

A: Commonly used tools include data integration tools (like Apache NiFi and Talend), data visualization tools (such as Tableau and Power BI), and machine learning platforms (like Amazon SageMaker and Google Cloud’s Vertex AI, the successor to AI Platform). Cloud providers also offer services for data storage (e.g., Amazon S3, Azure Blob Storage), cloud data warehouses (like Amazon Redshift and Google BigQuery), and orchestration (using tools like Apache Airflow). Selecting the right combination of tools is crucial for designing an efficient data analytics workflow.

Q5: How can organizations ensure data security and compliance when using cloud technologies?

A: To ensure data security and compliance when using cloud technologies, organizations should implement multi-layered security strategies that include encryption of data at rest and in transit, along with identity and access management (IAM) controls. Regular audits and vulnerability assessments should be conducted, and data handling must comply with applicable regulations such as GDPR and HIPAA. Additionally, organizations should use the security features provided by their cloud service providers, such as logging and monitoring services, to track data access and detect potential breaches in near real time.